Models / Nous ResearchHermes / / Nous Hermes 2 - Mixtral 8x7B-DPO API

Nous Hermes 2 - Mixtral 8x7B-DPO API

LLM

Flagship Nous Research MoE model trained on 1M+ GPT-4 and high-quality open dataset entries, excelling across diverse tasks.

Try our Nous Hermes API

API Usage

How to use Nous Hermes 2 - Mixtral 8x7B-DPOModel CardPrompting Nous Hermes 2 - Mixtral 8x7B-DPOApplications & Use CasesNous Hermes 2 - Mixtral 8x7B-DPO API Usage

Endpoint

NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO

curl -X POST "https://api.together.xyz/v1/chat/completions" \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

"messages": [

{

"role": "user",

"content": "What are some fun things to do in New York?"

}

]

}'

curl -X POST "https://api.together.xyz/v1/images/generations" \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

"prompt": "Draw an anime style version of this image.",

"width": 1024,

"height": 768,

"steps": 28,

"n": 1,

"response_format": "url",

"image_url": "https://huggingface.co/datasets/patrickvonplaten/random_img/resolve/main/yosemite.png"

}'

curl -X POST https://api.together.xyz/v1/chat/completions \

-H "Content-Type: application/json" \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-d '{

"model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

"messages": [{

"role": "user",

"content": [

{"type": "text", "text": "Describe what you see in this image."},

{"type": "image_url", "image_url": {"url": "https://huggingface.co/datasets/patrickvonplaten/random_img/resolve/main/yosemite.png"}}

]

}],

"max_tokens": 512

}'

curl -X POST https://api.together.xyz/v1/chat/completions \

-H "Content-Type: application/json" \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-d '{

"model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

"messages": [{

"role": "user",

"content": "Given two binary strings `a` and `b`, return their sum as a binary string"

}]

}'

curl -X POST https://api.together.xyz/v1/rerank \

-H "Content-Type: application/json" \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-d '{

"model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

"query": "What animals can I find near Peru?",

"documents": [

"The giant panda (Ailuropoda melanoleuca), also known as the panda bear or simply panda, is a bear species endemic to China.",

"The llama is a domesticated South American camelid, widely used as a meat and pack animal by Andean cultures since the pre-Columbian era.",

"The wild Bactrian camel (Camelus ferus) is an endangered species of camel endemic to Northwest China and southwestern Mongolia.",

"The guanaco is a camelid native to South America, closely related to the llama. Guanacos are one of two wild South American camelids; the other species is the vicuña, which lives at higher elevations."

],

"top_n": 2

}'

curl -X POST https://api.together.xyz/v1/embeddings \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"input": "Our solar system orbits the Milky Way galaxy at about 515,000 mph.",

"model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO"

}'

curl -X POST https://api.together.xyz/v1/completions \

-H "Content-Type: application/json" \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-d '{

"model": "meta-llama/Llama-4-Maverick-17B-128E-Instruct-FP8",

"prompt": "A horse is a horse",

"max_tokens": 32,

"temperature": 0.1,

"safety_model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO"

}'

curl --location 'https://api.together.ai/v1/audio/generations' \

--header 'Content-Type: application/json' \

--header 'Authorization: Bearer $TOGETHER_API_KEY' \

--output speech.mp3 \

--data '{

"input": "Today is a wonderful day to build something people love!",

"voice": "helpful woman",

"response_format": "mp3",

"sample_rate": 44100,

"stream": false,

"model": "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO"

}'

curl -X POST "https://api.together.xyz/v1/audio/transcriptions" \

-H "Authorization: Bearer $TOGETHER_API_KEY" \

-F "model=NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO" \

-F "language=en" \

-F "response_format=json" \

-F "timestamp_granularities=segment"

from together import Together

client = Together()

response = client.chat.completions.create(

model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

messages=[

{

"role": "user",

"content": "What are some fun things to do in New York?"

}

]

)

print(response.choices[0].message.content)

from together import Together

client = Together()

imageCompletion = client.images.generate(

model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

width=1024,

height=768,

steps=28,

prompt="Draw an anime style version of this image.",

image_url="https://huggingface.co/datasets/patrickvonplaten/random_img/resolve/main/yosemite.png",

)

print(imageCompletion.data[0].url)

from together import Together

client = Together()

response = client.chat.completions.create(

model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

messages=[{

"role": "user",

"content": [

{"type": "text", "text": "Describe what you see in this image."},

{"type": "image_url", "image_url": {"url": "https://huggingface.co/datasets/patrickvonplaten/random_img/resolve/main/yosemite.png"}}

]

}]

)

print(response.choices[0].message.content)

from together import Together

client = Together()

response = client.chat.completions.create(

model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

messages=[

{

"role": "user",

"content": "Given two binary strings `a` and `b`, return their sum as a binary string"

}

],

)

print(response.choices[0].message.content)

from together import Together

client = Together()

query = "What animals can I find near Peru?"

documents = [

"The giant panda (Ailuropoda melanoleuca), also known as the panda bear or simply panda, is a bear species endemic to China.",

"The llama is a domesticated South American camelid, widely used as a meat and pack animal by Andean cultures since the pre-Columbian era.",

"The wild Bactrian camel (Camelus ferus) is an endangered species of camel endemic to Northwest China and southwestern Mongolia.",

"The guanaco is a camelid native to South America, closely related to the llama. Guanacos are one of two wild South American camelids; the other species is the vicuña, which lives at higher elevations.",

]

response = client.rerank.create(

model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

query=query,

documents=documents,

top_n=2

)

for result in response.results:

print(f"Relevance Score: {result.relevance_score}")

from together import Together

client = Together()

response = client.embeddings.create(

model = "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

input = "Our solar system orbits the Milky Way galaxy at about 515,000 mph"

)

from together import Together

client = Together()

response = client.completions.create(

model="meta-llama/Llama-4-Maverick-17B-128E-Instruct-FP8",

prompt="A horse is a horse",

max_tokens=32,

temperature=0.1,

safety_model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

)

print(response.choices[0].text)

from together import Together

client = Together()

speech_file_path = "speech.mp3"

response = client.audio.speech.create(

model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

input="Today is a wonderful day to build something people love!",

voice="helpful woman",

)

response.stream_to_file(speech_file_path)

from together import Together

client = Together()

response = client.audio.transcribe(

model="NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

language="en",

response_format="json",

timestamp_granularities="segment"

)

print(response.text)

import Together from 'together-ai';

const together = new Together();

const completion = await together.chat.completions.create({

model: 'NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO',

messages: [

{

role: 'user',

content: 'What are some fun things to do in New York?'

}

],

});

console.log(completion.choices[0].message.content);

import Together from "together-ai";

const together = new Together();

async function main() {

const response = await together.images.create({

model: "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

width: 1024,

height: 1024,

steps: 28,

prompt: "Draw an anime style version of this image.",

image_url: "https://huggingface.co/datasets/patrickvonplaten/random_img/resolve/main/yosemite.png",

});

console.log(response.data[0].url);

}

main();

import Together from "together-ai";

const together = new Together();

const imageUrl = "https://huggingface.co/datasets/patrickvonplaten/random_img/resolve/main/yosemite.png";

async function main() {

const response = await together.chat.completions.create({

model: "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

messages: [{

role: "user",

content: [

{ type: "text", text: "Describe what you see in this image." },

{ type: "image_url", image_url: { url: imageUrl } }

]

}]

});

console.log(response.choices[0]?.message?.content);

}

main();

import Together from "together-ai";

const together = new Together();

async function main() {

const response = await together.chat.completions.create({

model: "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

messages: [{

role: "user",

content: "Given two binary strings `a` and `b`, return their sum as a binary string"

}]

});

console.log(response.choices[0]?.message?.content);

}

main();

import Together from "together-ai";

const together = new Together();

const query = "What animals can I find near Peru?";

const documents = [

"The giant panda (Ailuropoda melanoleuca), also known as the panda bear or simply panda, is a bear species endemic to China.",

"The llama is a domesticated South American camelid, widely used as a meat and pack animal by Andean cultures since the pre-Columbian era.",

"The wild Bactrian camel (Camelus ferus) is an endangered species of camel endemic to Northwest China and southwestern Mongolia.",

"The guanaco is a camelid native to South America, closely related to the llama. Guanacos are one of two wild South American camelids; the other species is the vicuña, which lives at higher elevations."

];

async function main() {

const response = await together.rerank.create({

model: "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

query: query,

documents: documents,

top_n: 2

});

for (const result of response.results) {

console.log(`Relevance Score: ${result.relevance_score}`);

}

}

main();

import Together from "together-ai";

const together = new Together();

const response = await client.embeddings.create({

model: 'NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO',

input: 'Our solar system orbits the Milky Way galaxy at about 515,000 mph',

});

import Together from "together-ai";

const together = new Together();

async function main() {

const response = await together.completions.create({

model: "meta-llama/Llama-4-Maverick-17B-128E-Instruct-FP8",

prompt: "A horse is a horse",

max_tokens: 32,

temperature: 0.1,

safety_model: "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO"

});

console.log(response.choices[0]?.text);

}

main();

import Together from 'together-ai';

const together = new Together();

async function generateAudio() {

const res = await together.audio.create({

input: 'Today is a wonderful day to build something people love!',

voice: 'helpful woman',

response_format: 'mp3',

sample_rate: 44100,

stream: false,

model: 'NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO',

});

if (res.body) {

console.log(res.body);

const nodeStream = Readable.from(res.body as ReadableStream);

const fileStream = createWriteStream('./speech.mp3');

nodeStream.pipe(fileStream);

}

}

generateAudio();

import Together from "together-ai";

const together = new Together();

const response = await together.audio.transcriptions.create(

model: "NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO",

language: "en",

response_format: "json",

timestamp_granularities: "segment"

});

console.log(response)

How to use Nous Hermes 2 - Mixtral 8x7B-DPO

Model details

Prompting Nous Hermes 2 - Mixtral 8x7B-DPO

Applications & Use Cases

Model Provider:

Nous Research

Type:

Chat

Variant:

Parameters:

46B

Deployment:

✔ Serverless

✔ On-Demand Dedicated

✔ Monthly Reserved

Quantization

FP16

Context length:

32768

Pricing:

$0.60

Check pricing

Run in playground

Deploy model

Quickstart docs

Quickstart docs

Serverless

Monthly Reserved

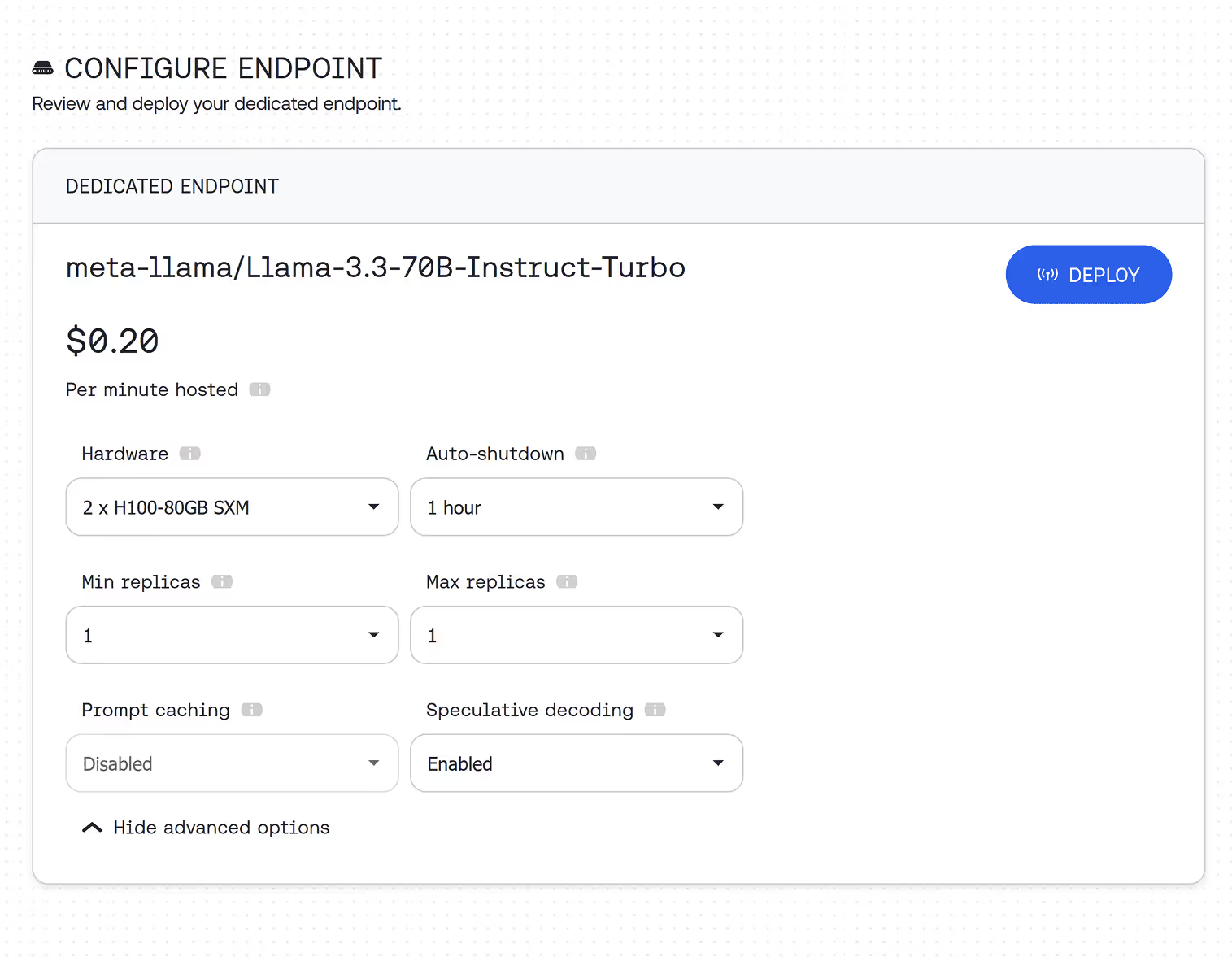

Looking for production scale? Deploy on a dedicated endpoint

Deploy Nous Hermes 2 - Mixtral 8x7B-DPO on a dedicated endpoint with custom hardware configuration, as many instances as you need, and auto-scaling.