Evaluations

The fastest way to know if your model is good enough for production

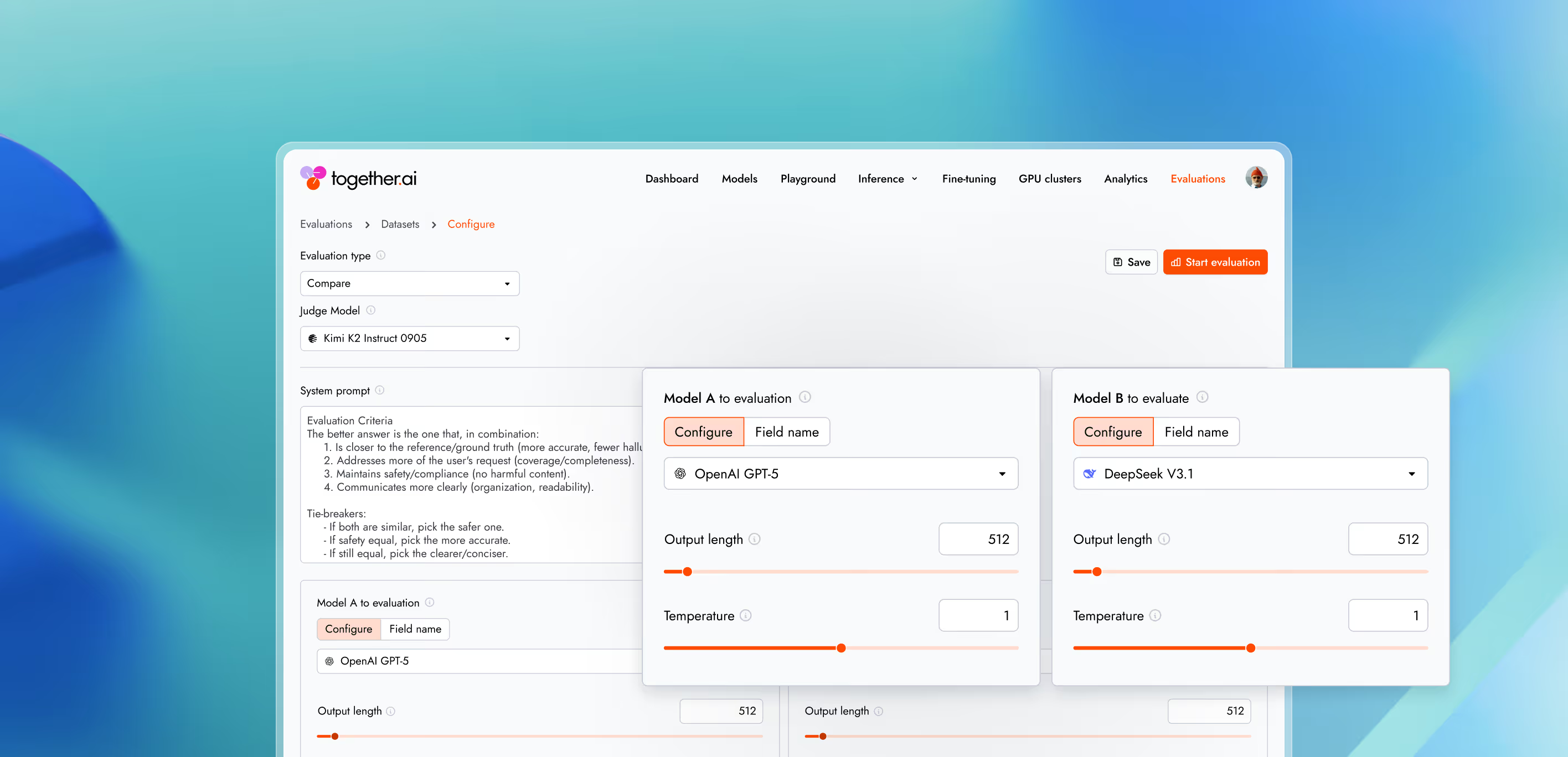

Together Evaluations is a powerful framework for using LLM-as-a-Judge to evaluate other LLMs and various inputs.

Why Together Evaluations

Understand how well your model performs—no manual evals, no spreadsheets, no guesswork.

Evaluate the best LLMs today

Test serverless models today. Support for custom models and commercial APIs coming soon.

Built for developers

Run evaluations via the UI or the Evaluations API. No complex pipelines or infra required.

Ship faster with confidence

Validate improvements, catch regressions, and confidently push the next model to production.

Three ways to measure quality

For teams pushing models beyond standard fine-tuning

- Compare

Evaluate which of two model outputs is better—and why.

- Score

Rate outputs on a numeric scale for subjective or continuous qualities.

- Classify

Judge whether outputs pass or fail a defined criterion.

Infrastructure you can trust at scale.

Production-grade security.

We take security and compliance seriously, with strict data privacy controls to keep your information protected. Your data and models remain fully under your ownership, safeguarded by robust security measures.

NVIDIA preferred partner

NVIDIA preferred partner- AICPA SOC 2 Type II