Serverless Inference

The fastest way to run open‑source models on demand

High-performance inference, powered by our in-house research. No infrastructure to manage, no long-term commitments.

Serverless inference on Together AI

Access all the top open-source models in one place.

Up to 2.75x faster inference

Powered by next-gen GPUs and key innovations, we deliver inference speeds around 2x faster than the next fastest provider.

Every modality, one API

Text, image, video, code, and voice. Access the full generative AI stack without stitching together multiple providers.

Built on cutting-edge systems research

Inference performance is driven by continuous optimization across kernels, scheduling, and runtime systems.

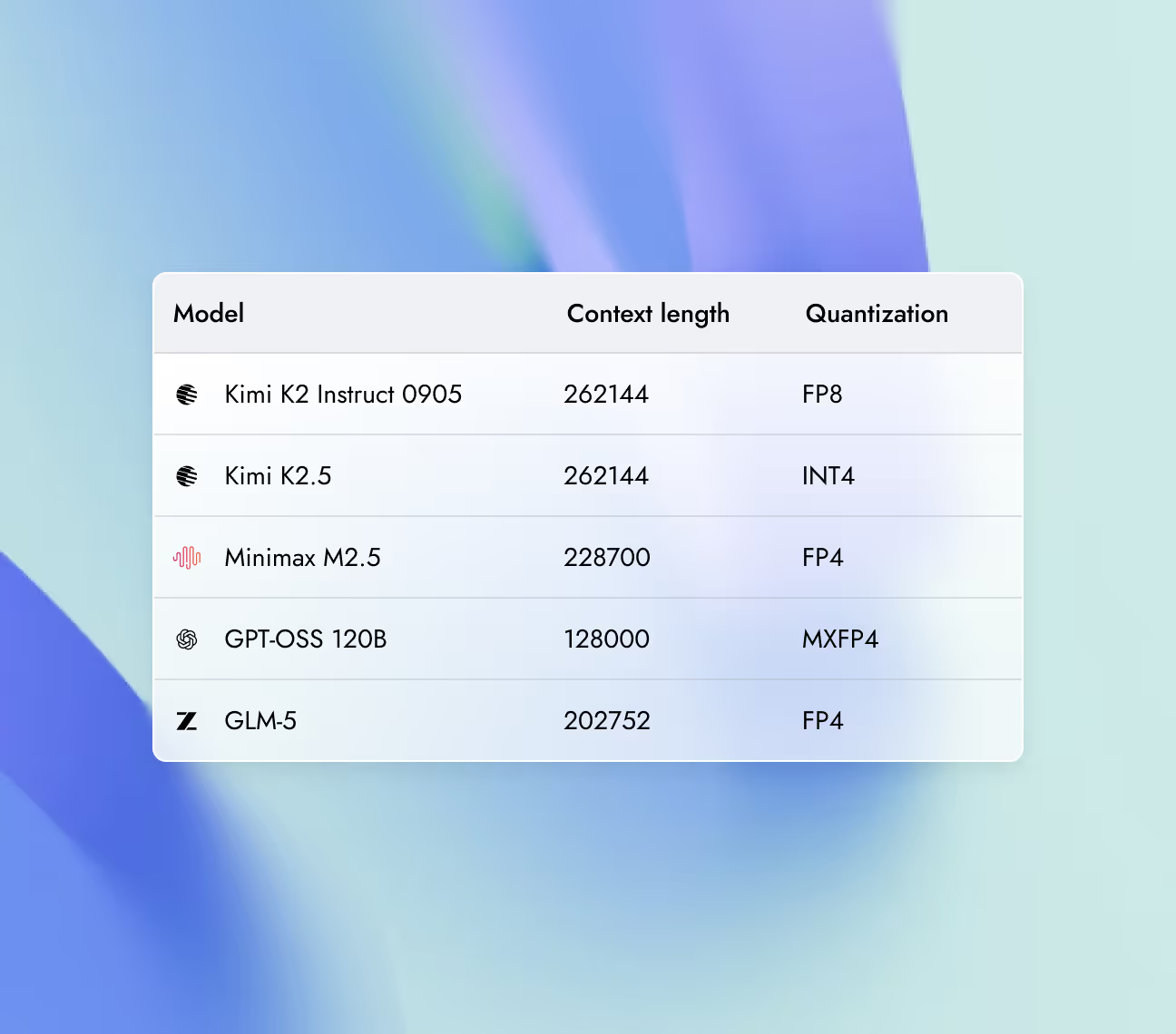

Build with leading models

Explore top-performing models across text, image, video, code, and voice.

Key capabilities, purpose-built for AI natives

Scale from self-serve instant clusters to thousands of GPUs, all optimized for better performance with Together Kernel Collection.

Reduce end-to-end latency by predicting and validating multiple tokens per step instead of decoding strictly sequentially. AdapTive-LeArning Speculative System (ATLAS) learns from production traffic to further accelerate inference.
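The idea of predicting several tokens and then validating them can be illustrated with a minimal greedy speculative decoding sketch. This is a generic toy, not the ATLAS implementation: `target`, `draft`, and `speculative_decode` are hypothetical names, the "models" are plain functions over integer tokens, and a real system would verify all drafted positions in one batched forward pass rather than one call per token.

```python
from typing import Callable, List

Token = int
NextToken = Callable[[List[Token]], Token]  # maps a context to its next token


def greedy_decode(target: NextToken, prompt: List[Token], n: int) -> List[Token]:
    """Baseline: strictly sequential decoding with the target model only."""
    out = list(prompt)
    for _ in range(n):
        out.append(target(out))
    return out[len(prompt):]


def speculative_decode(
    target: NextToken,
    draft: NextToken,
    prompt: List[Token],
    max_new_tokens: int,
    k: int = 4,
) -> List[Token]:
    """Greedy speculative decoding; output matches greedy_decode(target, ...)."""
    out = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(out) < goal:
        # 1. The cheap draft model proposes up to k tokens.
        proposal: List[Token] = []
        ctx = list(out)
        for _ in range(min(k, goal - len(out))):
            token = draft(ctx)
            proposal.append(token)
            ctx.append(token)
        # 2. The target model validates the proposal position by position.
        for token in proposal:
            expected = target(out)
            out.append(expected)   # always keep the target's own token
            if expected != token:  # first mismatch: drop the rest of the draft
                break
    return out[len(prompt):]


# Toy models (assumptions for illustration): the target counts modulo 10,
# and the draft agrees except right after token 4.
def target(ctx: List[Token]) -> Token:
    return (ctx[-1] + 1) % 10


def draft(ctx: List[Token]) -> Token:
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10


tokens = speculative_decode(target, draft, [0], max_new_tokens=12, k=4)
```

Because every emitted token is the one the target model would have chosen, the draft model only affects speed, never output. The better the draft matches the target, the more tokens are accepted per verification step.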

Same API, better models. No code changes required. Drop in your API key and access hundreds of open-source models through the same interface you're already using.
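As a rough sketch of what "drop in your API key" can look like, the snippet below builds a chat request using only the Python standard library. The base URL, endpoint path, and payload shape are assumptions that follow the common chat-completions convention, and the model name is a placeholder; check the API reference for the exact values.

```python
import json
import urllib.request

# Assumption: an OpenAI-style chat completions endpoint. Verify in the API docs.
BASE_URL = "https://api.together.xyz/v1"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": model,  # placeholder: use a model ID from the catalog
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


# Usage (requires a real key and network access):
#   request = build_chat_request("example-org/example-model", "Hello", api_key)
#   reply = send(request)
```

Under these assumptions, switching models is a change to the `model` string only; the request shape stays the same.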

Run quantized models at full quality — our intelligent quantization reduces compute costs and improves speed without sacrificing output accuracy.
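To make the trade-off concrete, here is a minimal sketch of symmetric per-tensor quantization. It uses int8 because it is the simplest case to show. It is not the quantization scheme used on this platform (the benchmarks on this page mention FP8 and FP4), and the function names and sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integer codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, for illustration only.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding bounds the per-weight error by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight is stored in 8 bits instead of 16 or 32, and the reconstruction error is at most `scale / 2`. Production schemes reduce that error further with finer-grained scales and calibration.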

Research-optimized, best-in-class performance

We achieved up to 2x faster serverless inference for the most demanding LLMs, including GPT-OSS, Qwen, Kimi, and DeepSeek.

Output speed: gpt-oss-20B (low), by provider

Providers compared: Together AI, Vertex, Lightning, Databricks, Nebius Base, Novita, Amazon, Cloudflare, Hyperbolic.

Together AI vs other providers

2x faster

We achieved nearly 2x faster serverless inference performance for gpt-oss-20B when compared with the next fastest provider.

Output speed: Qwen3 235B 2507, by provider

Providers compared: Together AI (FP8), Amazon, Lightning, Databricks, Nebius Base, Novita, Cloudflare, Hyperbolic.

Together AI vs other providers

2.75x faster

We achieved up to 2.75x faster serverless inference performance for Qwen3 235B 2507 when compared with the next fastest provider.

Output speed: Kimi K2 0905, by provider

Providers compared: Together AI, Fireworks, Baseten (FP4), Parasail, DeepInfra, Novita.

Together AI vs other providers

65% faster

We achieved over 65% faster serverless inference performance for Kimi-K2-0905 when compared with the next fastest provider.

Output speed: DeepSeek V3.1, by provider

Providers compared: Together AI, Fireworks, Baseten (FP4), Vertex, Parasail (FP8), Lightning AI, Amazon, GMI (FP8), Novita, DeepInfra (FP4).

Together AI vs other providers

10% faster

We achieved over 10% faster serverless inference performance for DeepSeek-V3.1 when compared with the next fastest provider.

Output speed: DeepSeek R1 0528, by provider

Providers compared: Together AI, Nebius Fast (FP4), Fireworks Fast, Vertex, Azure, Together AI (Throughput), Hyperbolic, DeepInfra, Novita, Parasail, Nebius.

Together AI vs other providers

13% faster

We achieved over 13% faster serverless inference performance for DeepSeek-R1-0528 when compared with the next fastest provider.

Deployment options

Run models using different deployment options depending on latency needs, traffic patterns, and infrastructure control.

Real-time

A fully managed inference API that automatically scales with request volume.

Batch

Process massive workloads of up to 30 billion tokens asynchronously, at up to 50% less cost.

Dedicated Model Inference

An inference endpoint backed by reserved, isolated compute resources and the Together AI inference engine.

Dedicated Container Inference

Run inference with your own engine and model on fully-managed, scalable infrastructure.

Production-grade security and data privacy

We take security and compliance seriously, with strict data privacy controls to keep your information protected. Your data and models remain fully under your ownership, safeguarded by robust security measures.

NVIDIA preferred partner

AICPA SOC 2 Type II