Dedicated Container Inference

GPU infrastructure purpose-built for generative media workloads

Deploy video, audio, and avatar generation models on the AI Native Cloud.

Why Dedicated Container Inference with Together AI?

Designed for production workloads that need consistent performance and operational control.

Predictable pricing, leading unit economics

Leading performance lowers your effective GPU cost. Rapid autoscaling ensures you only pay for the capacity you use. Train on Together and deploy to dedicated containers without artifact transfer fees.

Research-backed speed

Hands-on partnership to profile and optimize your models. Achieve up to 2.6x speedup for production video generation workloads.

Made for massive surges

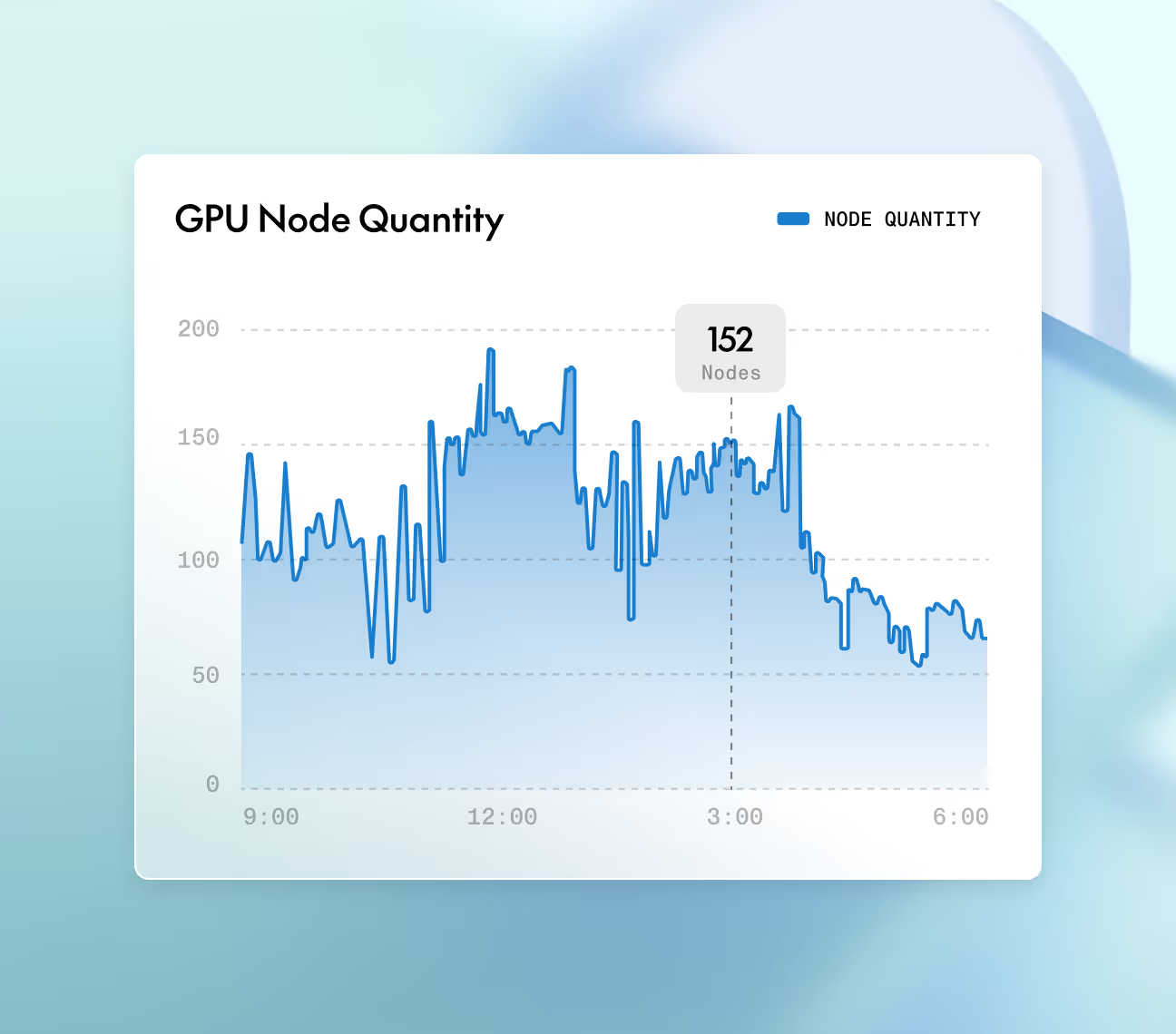

Proven elastic autoscaling during viral moments supports 10x plus traffic surges. Priority based queuing ensures paying customers never wait, even when free trial traffic spikes.

Key capabilities, purpose built for AI natives

From hardware to inference stack, every capability is optimized to get more out of every request.

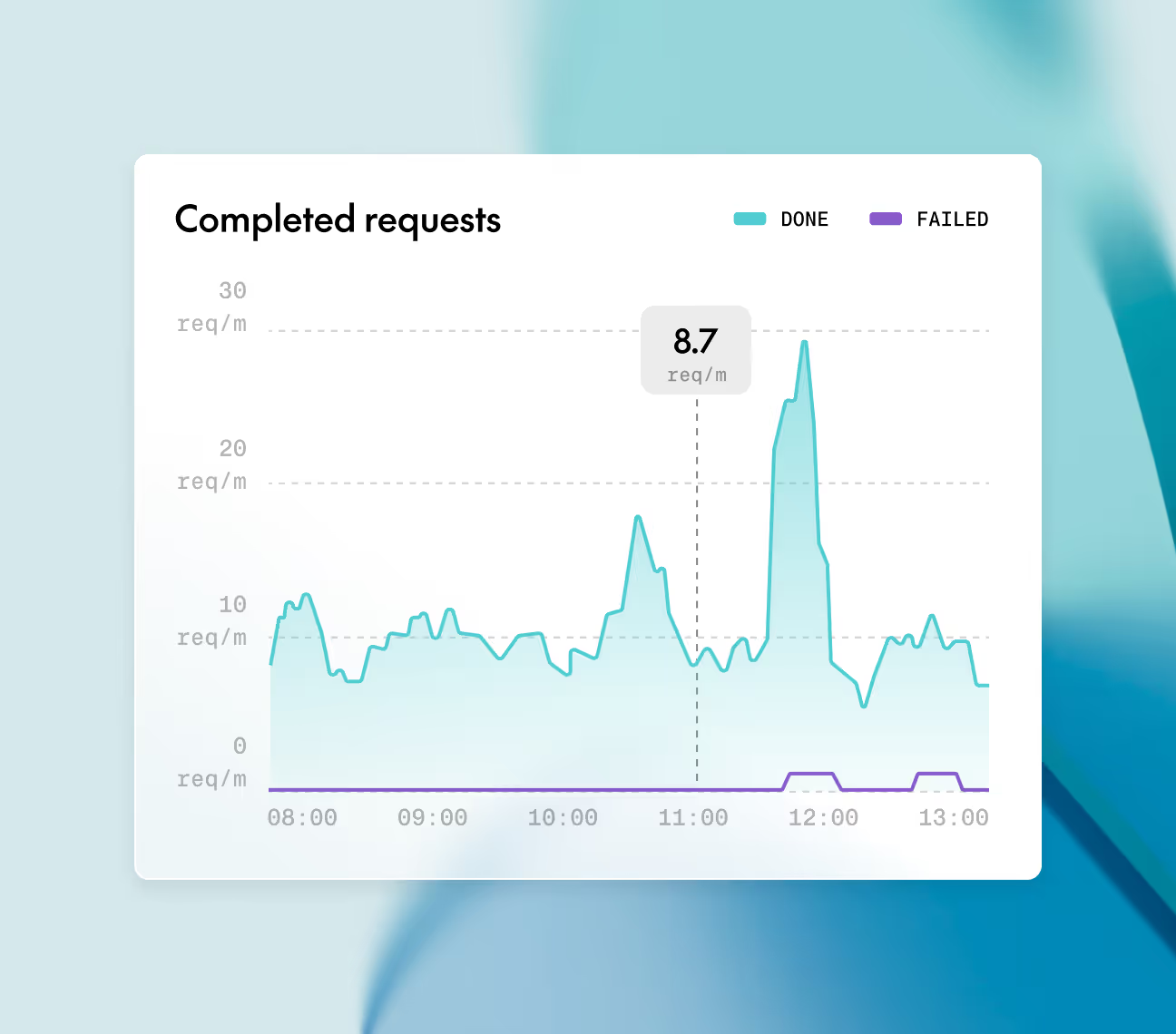

Monitor inference jobs, GPU utilization, queue depth, and latency metrics in real time. Full observability for production debugging.

Autoscale rapidly to handle 10x traffic surges with zero failures. Keep critical workloads running via priority-based queuing.

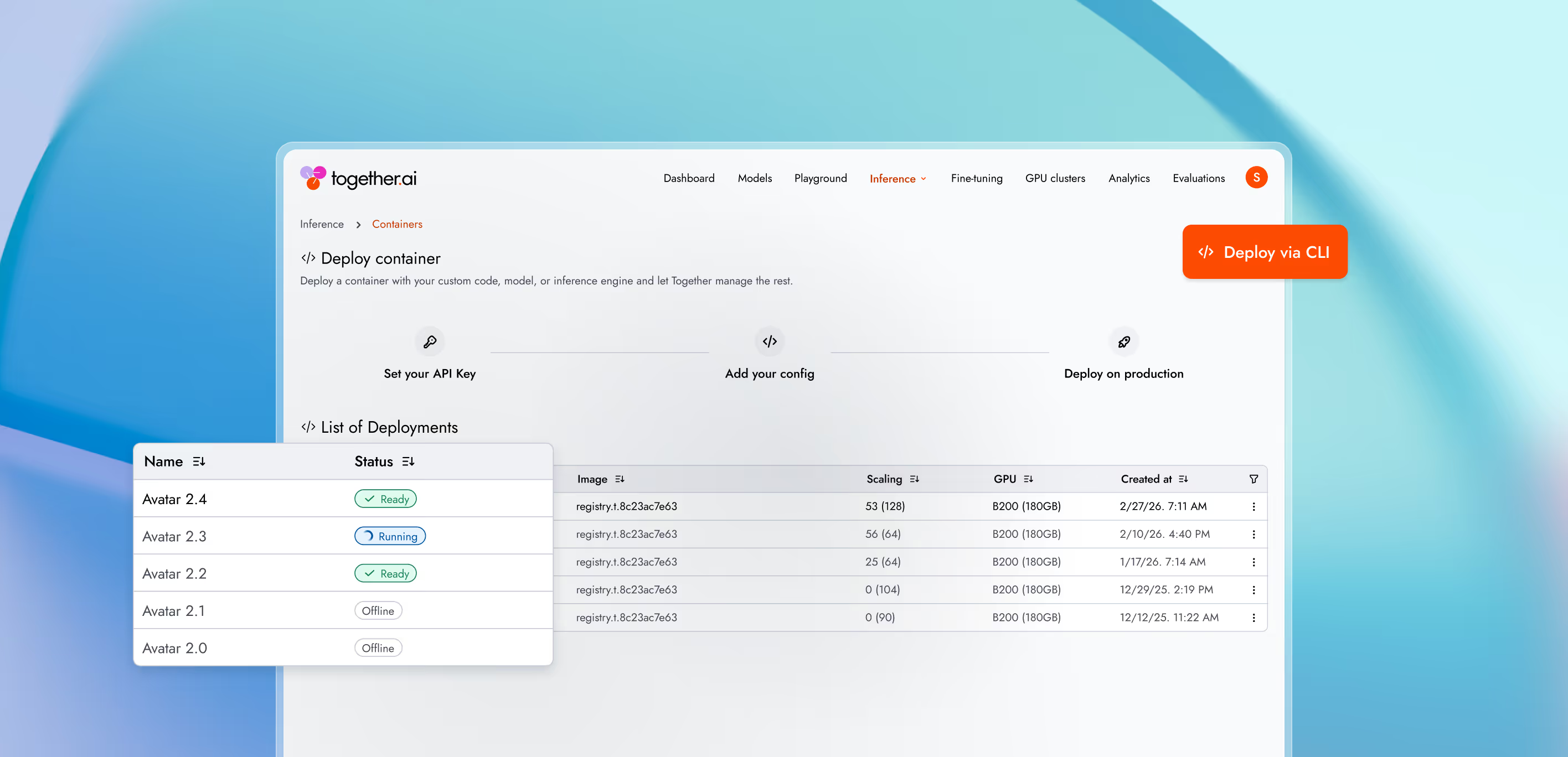

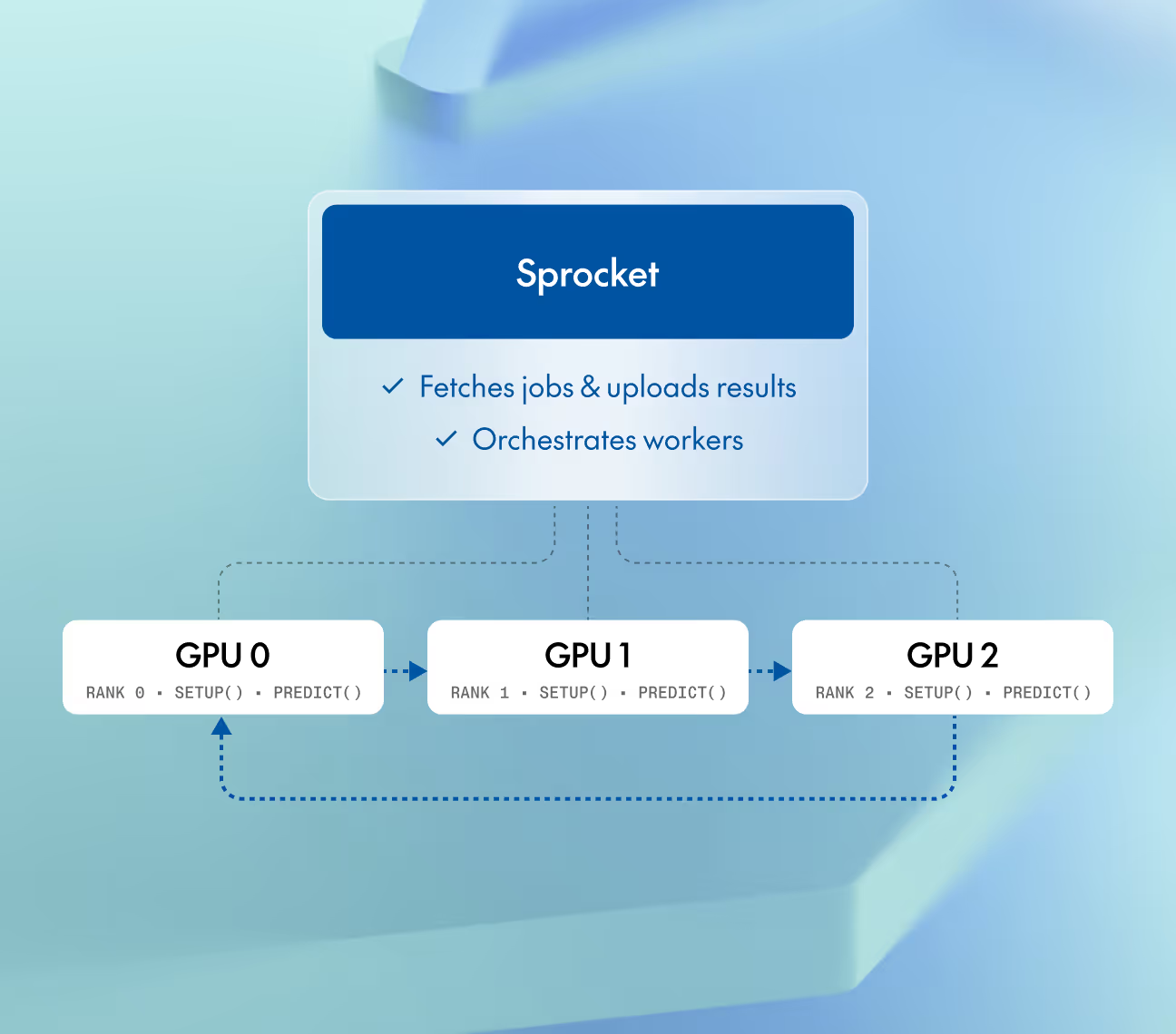

Deploy video generation models across multiple GPUs with a simplified torchrun interface. No manual subprocess management required.

Configure infrastructure and dependencies in pyproject.toml, implement model logic via the Sprocket SDK, and push directly to GPU clusters. Bypass foundational orchestration setup completely.

Powered by leading research

With Dedicated Container Inference, teams benefit from our research pipeline from automatic kernel optimizations to hands-on profiling and tuning for workload-specific performance improvements.

Throughput (TPS)

- Together dedicated container inference

- Previous provider

Together AI vs other providers

2.6x faster

Significant speedup of production video generation models through profiling and optimization, when compared with the next previous provider.

learn moreServer runtime

- inference (17 IMAGE resolutions x 3)

- Server start

together.compile vs alternatives

1.7x faster

together.compile automatically optimizes your kernel stack, delivering the fastest inference and near-instant server start and eliminating the tradeoff between compile-time optimization and cold start speed.

learn more

Deployment options

Run models using different deployment options depending on latency needs, traffic patterns, and infrastructure control.

Real-time

A fully managed inference API that automatically scales with request volume.

Best for

Batch

Process massive workloads of up to 30 billion tokens asynchronously, at up to 50% less cost.

Best for

Dedicated Model Inference

An inference endpoint backed by reserved, isolated compute resources and the Together AI inference engine.

Best for

Dedicated Container Inference

Run inference with your own engine and model on fully-managed, scalable infrastructure.

Best for

Production-grade

security and data privacy

We take security and compliance seriously, with strict data privacy controls to keep your information protected. Your data and models remain fully under your ownership, safeguarded by robust security measures.

NVIDIA preferred partner

NVIDIA preferred partner- AICPA SOC 2 Type II