Frontier AI Factory

Manufacture intelligence at industrial scale

Forge the AI frontier: trillion-parameter models, trillion-token inference, and efficient orchestration of 1K → 100K+ GPUs.

Why Together AI Factory

Industrial-grade AI infrastructure, custom-built for your AI projects.

NVIDIA Blackwell GPUs

NVIDIA's latest accelerated computing platform, like the NVIDIA GB200 NVL72 and NVIDIA HGX B200, tuned peak performance, supporting both training and inference.

Accelerated software stack

The Together Kernel Collection includes custom NVIDIA CUDA® kernels, reducing training times and costs with superior throughput.

Massive scale

Deploy 1000→ 100K+ NVIDIA GPUs across global locations, adapting to evolving workload demands for resilient, enterprise-ready setups.

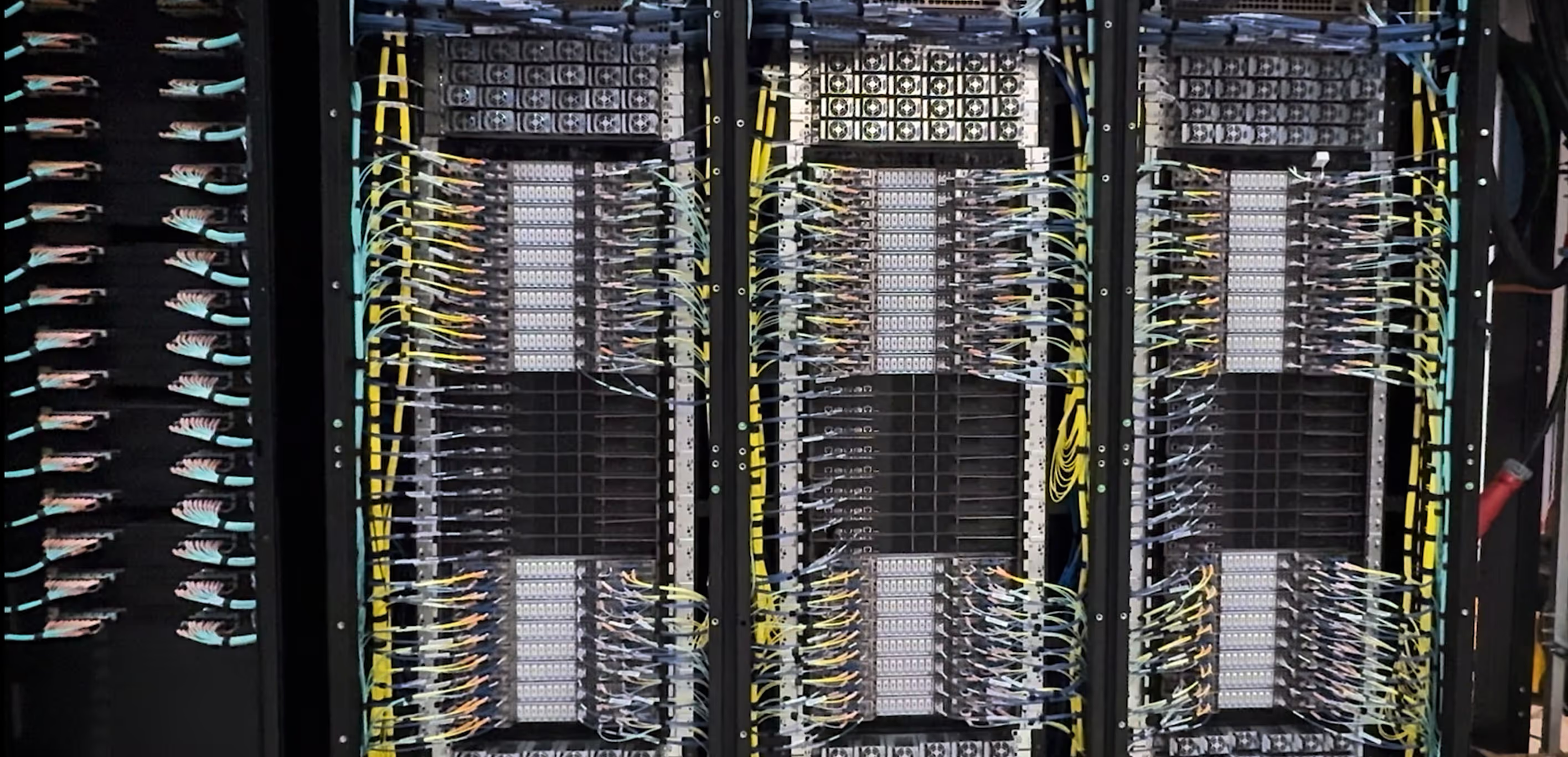

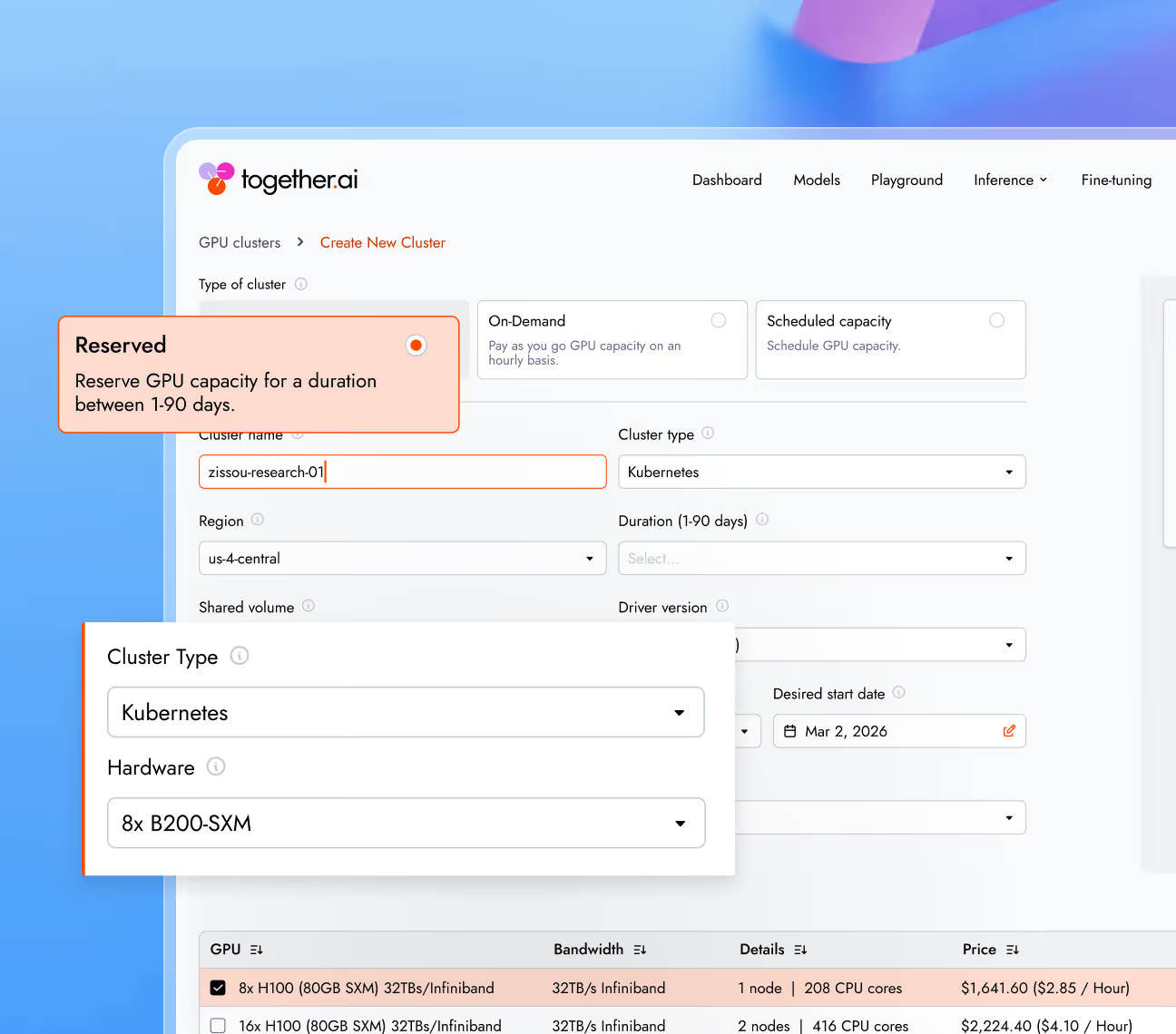

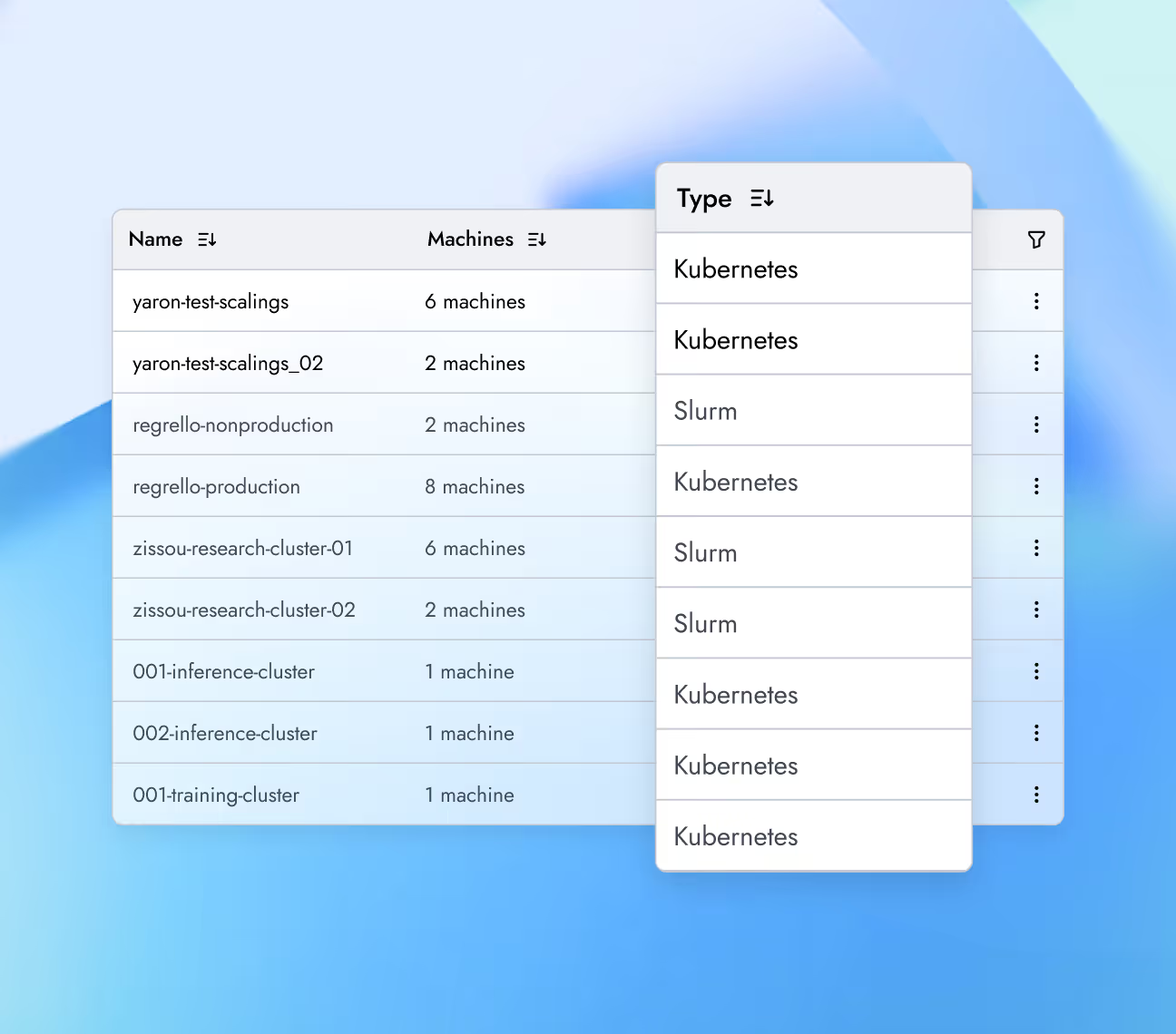

GPU Clusters, built for production

Choose the right GPUs, deploy with the orchestration stack you prefer, and operate with the observability and security required for production.

Deploy GPU clusters with integrated observability, managed orchestration, drivers, and networking entirely pre-configured. Run production workloads instantly without manual infrastructure setup.

Deploy Kubernetes for open-source extensibility, or run Slurm for precise hardware control and gang scheduling. Both fully managed.

Keep workloads running through hardware events using automated remediation and continuous health checks. Every GPU passes rigorous acceptance testing before joining the cluster.

Frontier research-powered training performance

The Together Kernel Collection, built by our Chief Scientist Tri Dao (creator of FlashAttention), delivers improved training and inference performance.

AI Training Performance: NVIDIA Hopper to Blackwell, with TKC

TKC vs SOTA Approaches

90% faster training

Training a 70B parameter Llama-architecture model (BF16) with an optimized TorchTitan + Together Kernel Collection (TKC) reached 15,264 tokens/second/GPU on NVIDIA HGX B200, up from 8,080 tokens/second on NVIDIA HGX H100—a 90% jump in training speed.

learn moreFP8 GEMM Performance (M x N x K)

- ThunderKittens B200

- cuBLAS H100

- cuBLASB200

ThunderKittens vs cuBLAS

~2× faster

ThunderKittens’ FP8 kernel for NVIDIA HGX B200 matches NVIDIA cuBLAS GEMM performance while delivering ~2× speedup over H100 FP8 GEMMs, leveraging Blackwell’s Tensor Core–accelerated matrix operations.

learn more

Orchestration flexibility for your AI workloads

Self-serve GPUs with hourly pricing per GPU.

- Managed KubernetesFor training and inferenceKubeadm-based upstream-compliant K8sNode autoscaling for elastic computeManaged Grafana for observabilityFlexible ingress configuration for inferenceHA control plane with managed upgradesCert Manager for HTTPS endpointsGet started

- Slurm on KubernetesFor training workloadsPrecise hardware control and gang schedulingSubmit jobs via srun, sbatchDirect SSH access with Slurm simplicity and K8s-backed resilienceEssential for distributed training synchronizationGet started

Regions and availability zones

- USA2GW+ in the portfolio with 600MW of near-term capacity in US.

- Europe150 MW+ available in Europe: UK, Spain, France, Portugal, and Iceland also.

- Asia & Middle EastOptions available based on the scale of the projects in Asia and the Middle East.

Choose from global regions to meet data residency and compliance requirements—HIPAA for healthcare, GDPR for Europe, or banking regulations.

Infrastructure you can trust at scale.

Production-grade security.

We take security and compliance seriously, with strict data privacy controls to keep your information protected. Your data and models remain fully under your ownership, safeguarded by robust security measures.

NVIDIA preferred partner

NVIDIA preferred partner- AICPA SOC 2 Type II