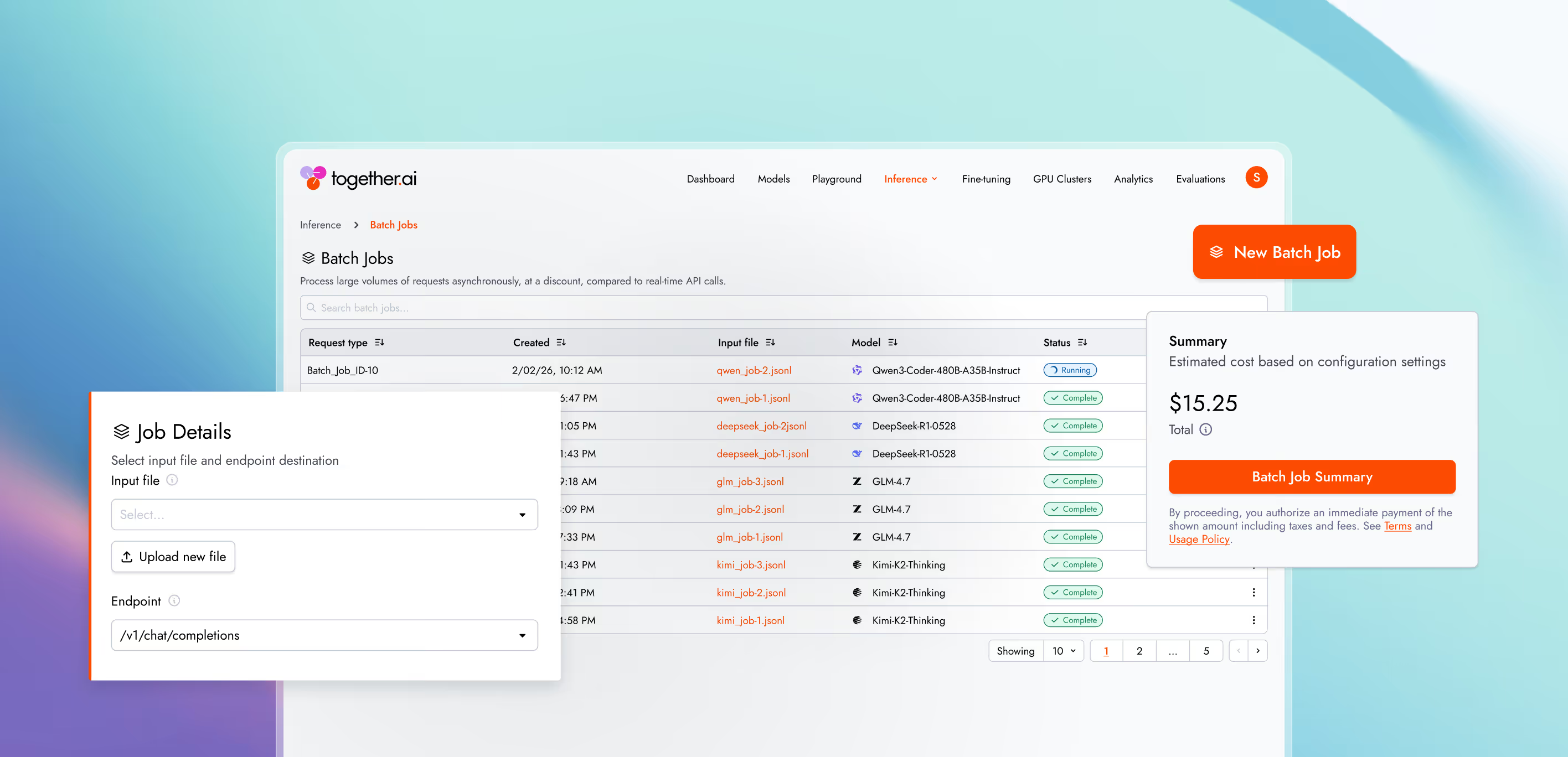

Batch Inference

Process massive workloads asynchronously

Scale to 30 billion tokens per model with any serverless model or private deployment.

Why Batch Inference with Together AI?

Lower cost, higher limits, and predictable processing

Up to 50% cost savings

Run batch jobs at up to half the cost of our real-time API for most serverless models. Process millions of requests economically without sacrificing quality or speed.

30B enqueued tokens per model

Run massive batch jobs that scale to 30 billion enqueued tokens per model per user. Need more? We'll customize limits for your specific use case.

<24h processing time SLA

Jobs consistently finish well under 24 hours — often within just hours. Submit and forget while we handle the scale.

Key capabilities, purpose built for AI natives

Run massive asynchronous inference jobs against a serverless model or dedicated model inference.

Run batch jobs across any serverless model or private deployment — no limitations on model choice.

Launch massive inference jobs simply by uploading a JSONL file. Start processing batches with just a few clicks. No orchestration or monitoring setup required.

Run batch jobs at up to half the cost of our real-time API for most serverless models. Process millions of requests without sacrificing quality or speed.

Research-optimized, best-in-class performance

We achieved up to 2x faster serverless inference for the most demanding LLMs, including GPT-OSS, Qwen, Kimi, and DeepSeek.

Output speed: gpt-oss-20B (low) providers

Together AI

Vexteer

Lightning

DatabricKs

Nebius base

Novita

Amazon

Cloudfare

Hyperbolic

Together AI vs other providers

2x faster

We achieved nearly 2x faster serverless inference performance for gpt-oss-20B when compared with the next fastest provider.

learn moreOutput Speed: Qwen3 235B 2507 providers

Together AI (FP8)

Amazon

Lightning

DatabricKs

Nebius base

Novita

Amazon

Cloudfare

Hyperbolic

Hyperbolic

Hyperbolic

Hyperbolic

Together ai vs other providers

2.75x faster

We achieved nearly 2x faster serverless inference performance for gpt-oss-20B when compared with the next fastest provider.

learn moreOutput Speed: Kimi K2 0905 providers

Together AI

Fireworks

Baseten (FP4)

Parasail

Deepinfra

Novita

Together ai vs other providers

65% faster

We achieved over 65% faster serverless inference performance forKimi-K2-0905 when compared with the next fastest provider.

learn moreOutput Speed: DeepSeek V3.1 providers

Together AI

Fireworks

Baseten (FP4)

Vertex

Parasail(FP8)

Lightning AI

Amazon

GMI (FP8)

Novita

Deepinfra(FP4)

Together ai vs other providers

10% faster

We achieved over 10% faster serverless inference performance forDeepSeek-V3.1 when compared with the next fastest provider.

learn moreOutput Speed: DeepSeek R1 0528 providers

Together AI

Neibus fast(FP4)

Fireworks Fast

Vertex

Azure

Together.ai (Throughput)

Hyperbolic

Deepinfra

Novita

Parasail

Nebius

Together ai vs other providers

13% faster

We achieved over 13% faster serverless inference performance forDeepSeek-R1-0528 when compared with the next fastest provider.

learn more

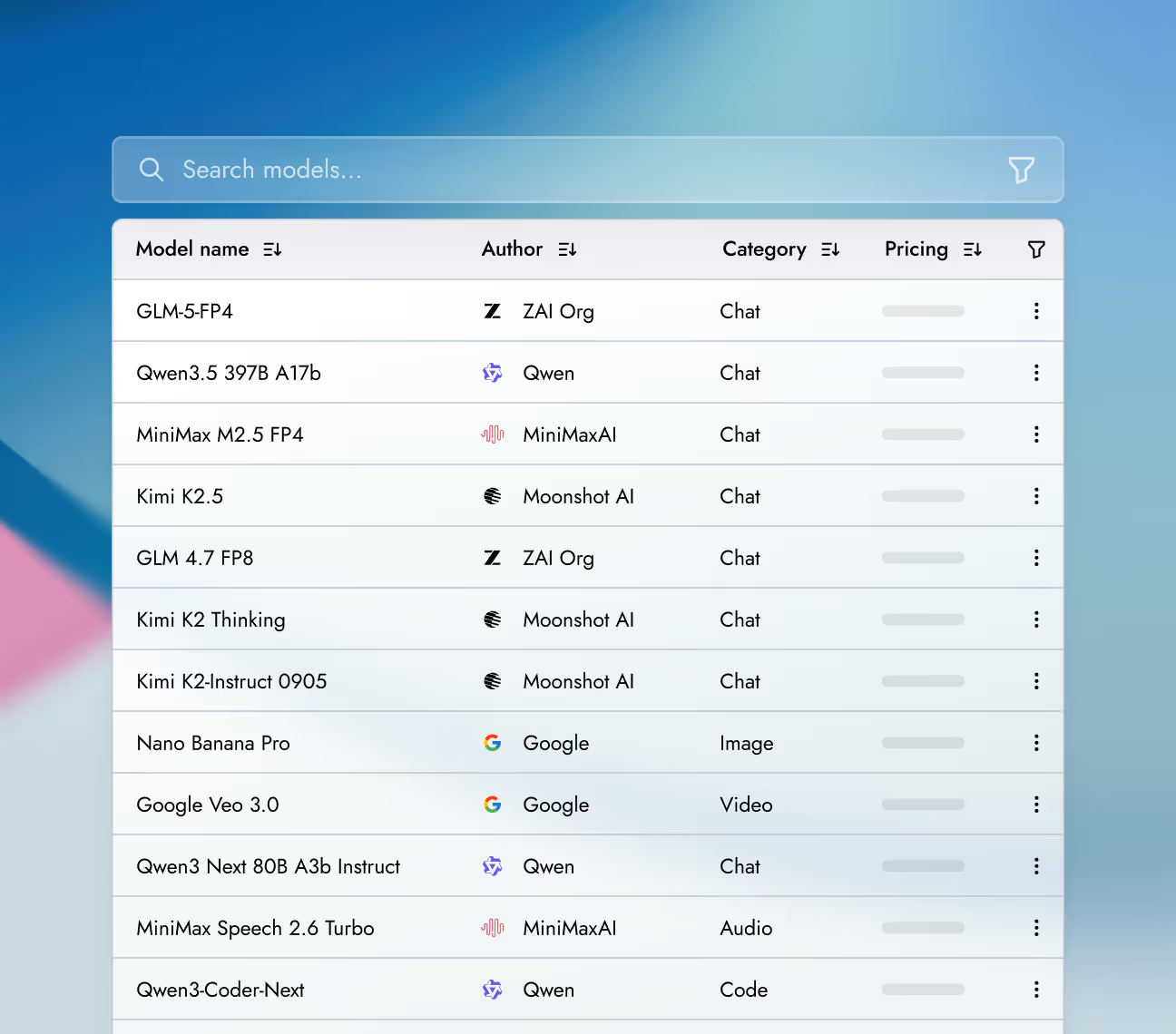

Deployment options

Run models using different deployment options depending on latency needs, traffic patterns, and infrastructure control.

Real-time

A fully managed inference API that automatically scales with request volume.

Best for

Batch

Process massive workloads of up to 30 billion tokens asynchronously, at up to 50% less cost.

Best for

Dedicated Model Inference

An inference endpoint backed by reserved, isolated compute resources and the Together AI inference engine.

Best for

Dedicated Container Inference

Run inference with your own engine and model on fully-managed, scalable infrastructure.

Best for

Production-grade

security and data privacy

We take security and compliance seriously, with strict data privacy controls to keep your information protected. Your data and models remain fully under your ownership, safeguarded by robust security measures.

NVIDIA preferred partner

NVIDIA preferred partner- AICPA SOC 2 Type II