Dedicated Model Inference

Deploy models on dedicated infrastructure, engineered for speed

Purpose-built for teams who need control and the best economics in the market.

Why Dedicated Inference with Together AI?

Designed for production workloads that need consistent performance and operational control.

Built for production inference

Scale to thousands of GPUs for always-on, production inference deployments.

Industry-leading unit economics

We deliver the fastest deployments, enabling the best price-performance on top GPUs.

Powered by frontier AI systems research

We continuously roll out the latest innovations to keep your deployments running fast.

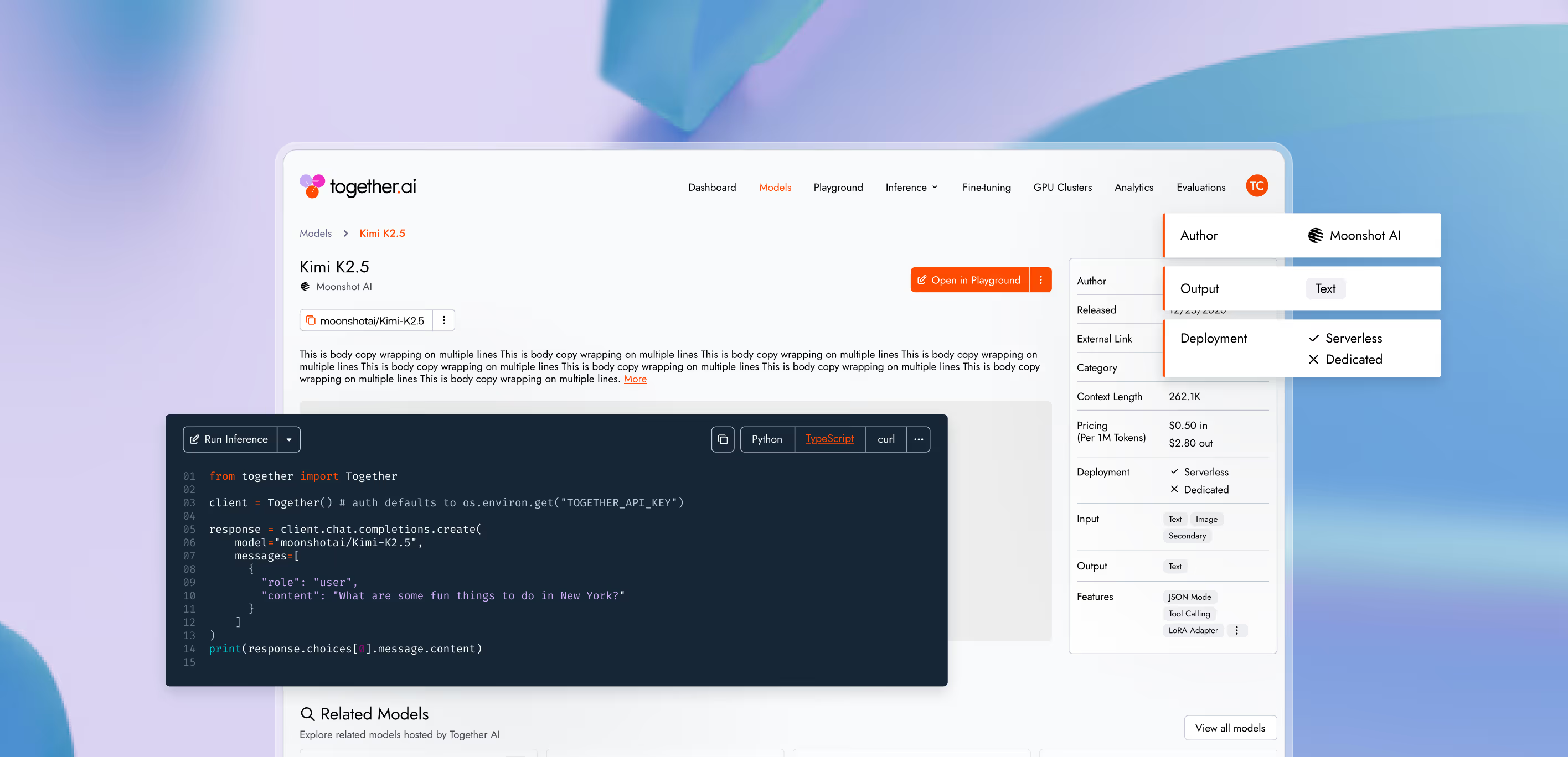

Build with leading models

Explore top-performing models across text, image, video, code, and voice.

Key capabilities, purpose-built for AI natives

Scale from self-serve instant clusters to thousands of GPUs, all optimized for better performance with the Together Kernel Collection.

Cut latency on dedicated infrastructure with ATLAS, Together's AdapTive-LeArning Speculator System. ATLAS predicts and validates multiple tokens per step, continuously accelerating workloads and removing decoding bottlenecks.
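
This page does not describe how ATLAS learns, so the sketch below shows only the generic draft-and-verify loop (greedy speculative decoding) that any speculator, static or adaptive, plugs into: a cheap draft model proposes several tokens, and the target model validates them in one round. The toy integer "models" and the names `speculative_decode`, `target`, `draft`, and `k` are illustrative assumptions, not Together's API.

```python
from typing import Callable, List, Tuple

# Toy "model": maps a token prefix to its greedy (argmax) next token.
NextToken = Callable[[List[int]], int]


def speculative_decode(target: NextToken, draft: NextToken, prompt: List[int],
                       max_new: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy draft-and-verify decoding; returns (tokens, verification rounds).

    The output always equals plain greedy decoding with `target`. A real engine
    checks all k drafted tokens in ONE batched target forward pass; this toy
    calls `target` once per position to keep the logic visible.
    """
    out = list(prompt)
    rounds = 0
    while len(out) - len(prompt) < max_new:
        rounds += 1
        # 1. Draft: the cheap model guesses the next k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # 2. Verify: keep drafted tokens while they match what the target would emit.
        accepted: List[int] = []
        for tok in proposal:
            expected = target(out + accepted)
            if tok != expected:
                accepted.append(expected)  # first mismatch: take the target's token, stop
                break
            accepted.append(tok)
        else:
            # Every drafted token was accepted, so the target's next token comes for free.
            accepted.append(target(out + accepted))
        out.extend(accepted)
    return out[:len(prompt) + max_new], rounds
```

With a draft that always agrees with the target, generating 8 new tokens from the prompt `[0]` takes 2 verification rounds instead of 8 sequential target steps. A weaker draft still yields identical output, just with more rounds, which is why improving the draft's acceptance rate (the goal of an adaptive speculator) translates directly into lower latency.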

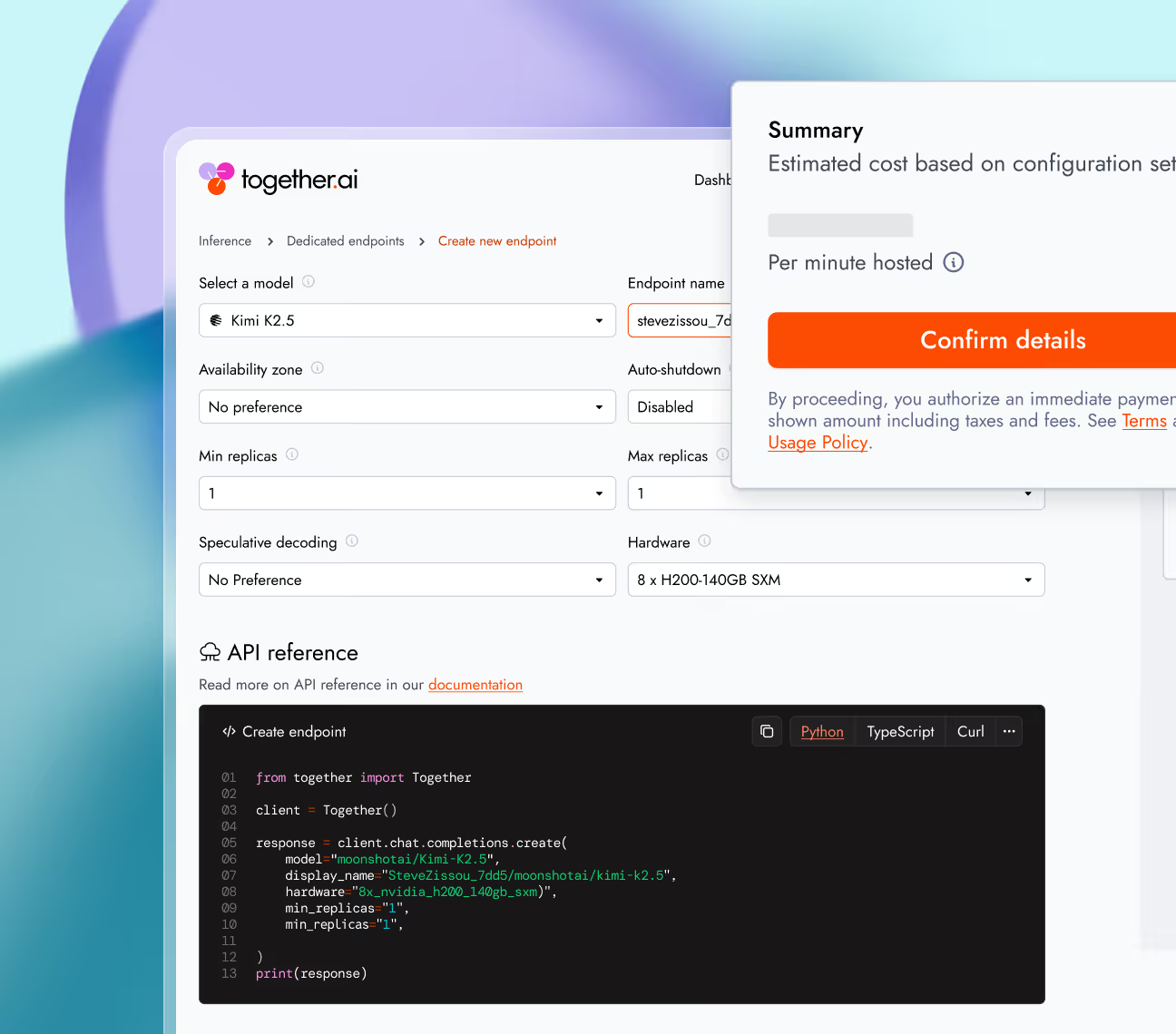

Launch dedicated endpoints in minutes by selecting a target model and hardware configuration. Establish production-ready inference environments without requiring deep infrastructure expertise.

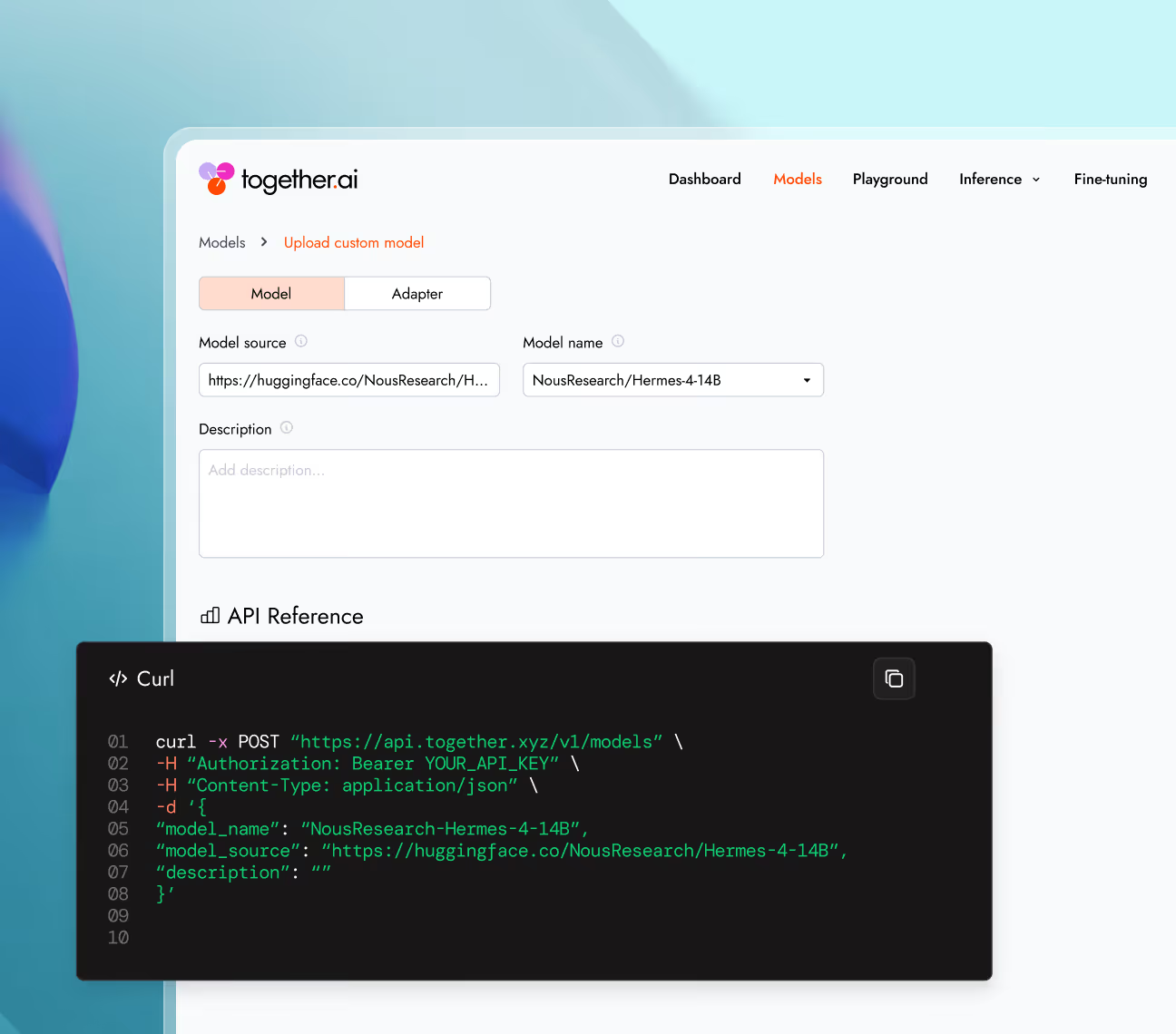

Deploy custom models directly from Hugging Face or S3 onto dedicated endpoints via the UI or CLI. Maintain complete ownership while offloading infrastructure management.
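
Once an endpoint is running, it is queried like any other model. The sketch below assumes Together's OpenAI-compatible chat-completions URL and assumes that a dedicated endpoint is addressed by putting its endpoint name in the `model` field; `build_chat_request` and the model names used with it are hypothetical placeholders. It uses only the Python standard library and builds the request without sending it.

```python
import json
import urllib.request

# Assumed OpenAI-compatible chat-completions URL.
API_URL = "https://api.together.xyz/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str,
                       max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for a deployed model."""
    body = {
        "model": model,  # hypothetical: the name of your dedicated endpoint
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send it, pass the request to `urllib.request.urlopen` with a real key (for example read from a `TOGETHER_API_KEY` environment variable) and read the generated text from the reply's `choices[0]["message"]["content"]`, following the OpenAI-style response shape.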

Research that ships

Our research team doesn't just publish. They build the optimizations that power every inference request.

ATLAS performance

3.18x faster

Chart: Performance on DeepSeek V3.1 (Arena Hard), comparing ATLAS, a static speculator, and no speculator.

ATLAS, our AdapTive-LeArning Speculator System, continuously learns from live traffic, outperforming both static speculators and specialized hardware.

Together AI CPD vs 2P1D

+40% throughput

Chart: CPD improves sustainable QPS by 35-40% over the baseline.

Long-context inference without the latency penalty. CPD (cache-aware prefill-decode disaggregation) separates warm and cold requests, cutting time-to-first-token and boosting throughput by up to 40%.
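
The page does not say how CPD decides which requests are warm. One plausible reading, assumed here, is that a request counts as warm when a large share of its prompt prefix is already in the KV cache, in the style of block-hashed prefix caching: warm requests can skip most of their prefill, while cold ones are sent to capacity reserved for prefill. The block size, threshold, pool names, and both functions are hypothetical.

```python
import hashlib
from typing import List, Set, Tuple

BLOCK = 4             # hypothetical tokens per cache block, tiny for the example
WARM_THRESHOLD = 0.5  # hypothetical cutoff: "warm" if >= 50% of the prompt is cached


def block_hashes(tokens: List[int]) -> List[str]:
    """Chained hashes of each full block, so a block's hash encodes its entire prefix."""
    hashes: List[str] = []
    prev = ""
    full = len(tokens) - len(tokens) % BLOCK
    for i in range(0, full, BLOCK):
        prev = hashlib.sha256((prev + str(tokens[i:i + BLOCK])).encode()).hexdigest()
        hashes.append(prev)
    return hashes


def route_request(tokens: List[int], cache: Set[str]) -> Tuple[str, float]:
    """Return (pool, cached_fraction) based on how much of the prefix is cached."""
    cached_blocks = 0
    for h in block_hashes(tokens):
        if h not in cache:
            break  # only a contiguous leading run of cached blocks can be reused
        cached_blocks += 1
    frac = cached_blocks * BLOCK / max(len(tokens), 1)
    pool = "warm-pool" if frac >= WARM_THRESHOLD else "cold-prefill-pool"
    return pool, frac
```

After a cold request is prefilled, its blocks would be recorded with `cache.update(block_hashes(tokens))`, so a later request that shares the same 12-token system prompplus 4 new tokens is found to be 75% cached and routed as warm. Keeping the two classes apart stops long cold prefills from stalling requests that only need a short prefill.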

Megakernel vs baseline

Up to 3.6x faster

Chart: Time to first 64 tokens, Megakernel (H100) vs baseline (B200).

Megakernel fuses an entire model's forward pass into a single GPU kernel. Built with the ThunderKittens framework, it eliminates the idle gaps between operations that keep GPUs from reaching their full potential.
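
To see why removing idle gaps matters, here is a deliberately simple cost model. The function name, the per-launch gap, and all numbers are illustrative assumptions, not Megakernel measurements.

```python
from typing import List


def forward_time_us(op_times_us: List[float], gap_us: float, fused: bool) -> float:
    """Toy latency model for one forward pass, in microseconds.

    Unfused: each op is a separate kernel, and the GPU sits idle for `gap_us`
    around every launch. Fused (megakernel-style): one launch for the whole
    pass, so the gap is paid once. Real megakernels also overlap work across
    ops, which this model ignores.
    """
    launches = 1 if fused else len(op_times_us)
    return sum(op_times_us) + launches * gap_us
```

With illustrative numbers, 256 ops of 5 µs each and a 3 µs gap per launch, the unfused pass takes 2048 µs and the fused pass 1283 µs, roughly 1.6x faster. The benefit grows as per-op work shrinks relative to the gap, which is typical of small-batch decoding.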

ParallelKittens vs NCCL

Up to 1.79x faster

Chart: BF16 all-reduce sum performance on 8x NVIDIA B200s, ParallelKittens (PK) vs NCCL.

ParallelKittens, an extension of ThunderKittens for multi-GPU workloads developed in collaboration with Stanford's Hazy Lab, cuts the synchronization overhead that large multi-GPU models pay on every forward pass.
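
An all-reduce sum, the collective benchmarked in the ParallelKittens comparison, leaves every GPU holding the element-wise sum of all GPUs' buffers. The sketch below simulates it with the classic ring algorithm (reduce-scatter, then all-gather), one of the algorithms NCCL can select; it does not depict how ParallelKittens implements the collective. Python floats stand in for BF16, lists stand in for GPU memory, and `ring_all_reduce` is a hypothetical name.

```python
from typing import Callable, List, Tuple


def ring_all_reduce(buffers: List[List[float]]) -> List[List[float]]:
    """Sum-all-reduce across n simulated GPUs using the ring algorithm.

    Reduce-scatter: in n-1 steps each rank passes one chunk to its right-hand
    neighbour, which adds it to its own copy; afterwards rank r holds the full
    sum of chunk (r + 1) % n. All-gather: n-1 more steps circulate the finished
    chunks until every rank holds the complete result.
    """
    n = len(buffers)
    length = len(buffers[0])
    bufs = [list(b) for b in buffers]  # copy: inputs stay untouched
    bounds = [(i * length // n, (i + 1) * length // n) for i in range(n)]

    def snapshot(chunk_of_rank: Callable[[int], int]) -> List[Tuple[int, List[float]]]:
        # All ranks send "simultaneously", so capture every outgoing chunk first.
        sends = []
        for rank in range(n):
            c = chunk_of_rank(rank)
            lo, hi = bounds[c]
            sends.append((c, bufs[rank][lo:hi]))
        return sends

    for step in range(n - 1):  # reduce-scatter: accumulate partial sums
        for r, (c, data) in enumerate(snapshot(lambda rank: (rank - step) % n)):
            lo, _ = bounds[c]
            dst = bufs[(r + 1) % n]
            for i, v in enumerate(data):
                dst[lo + i] += v

    for step in range(n - 1):  # all-gather: copy finished chunks around the ring
        for r, (c, data) in enumerate(snapshot(lambda rank: (rank + 1 - step) % n)):
            lo, hi = bounds[c]
            bufs[(r + 1) % n][lo:hi] = data
    return bufs
```

Each rank sends 2(n-1) chunks of size L/n, so total traffic per rank stays close to 2L regardless of GPU count, which makes the ring bandwidth-efficient. Its cost is the 2(n-1) dependent steps, each of which requires neighbours to synchronize before the next can start.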

Deployment options

Choose a deployment option based on your latency needs, traffic patterns, and how much control you need over infrastructure.

Real-time

A fully managed inference API that automatically scales with request volume.

Batch

Process massive workloads of up to 30 billion tokens asynchronously, at up to 50% less cost.

Dedicated Model Inference

An inference endpoint backed by reserved, isolated compute resources and the Together AI inference engine.

Dedicated Container Inference

Run inference with your own engine and model on fully-managed, scalable infrastructure.

Production-grade security and data privacy

We take security and compliance seriously, with strict data privacy controls to keep your information protected. Your data and models remain fully under your ownership, safeguarded by robust security measures.

- NVIDIA preferred partner

- AICPA SOC 2 Type II