Video has become one of the most popular mediums for information sharing. Yet the language distribution of popular video content on the internet does not necessarily reflect the diversity of global audiences. For example, a prior study found that 66% of videos from the top 250 YouTube channels are in English, while Spanish, the second most common language, accounts for only 15% [1,2]. This leaves much of this content inaccessible to viewers around the world and highlights the need for scalable video translation solutions.

Can cutting-edge AI help break down language barriers, making video content more accessible to global audiences?

Today, we are excited to introduce Violin, a fully open-source video translation tool powered by the Together API. The Violin pipeline combines state-of-the-art speech recognition, large language models, and speech synthesis to achieve high-quality video translation.

Beyond standard translation, we developed interactive and personalized features, such as a chat assistant that is aware of the video's content and a natural-language voice picker. We hope Violin can empower users across languages to access information more easily and help high-quality video content travel further across the web.

Violin: Breaking the language barriers of video sharing

To illustrate Violin’s capabilities, we took a recent technical talk from Together AI and translated it into a different language.

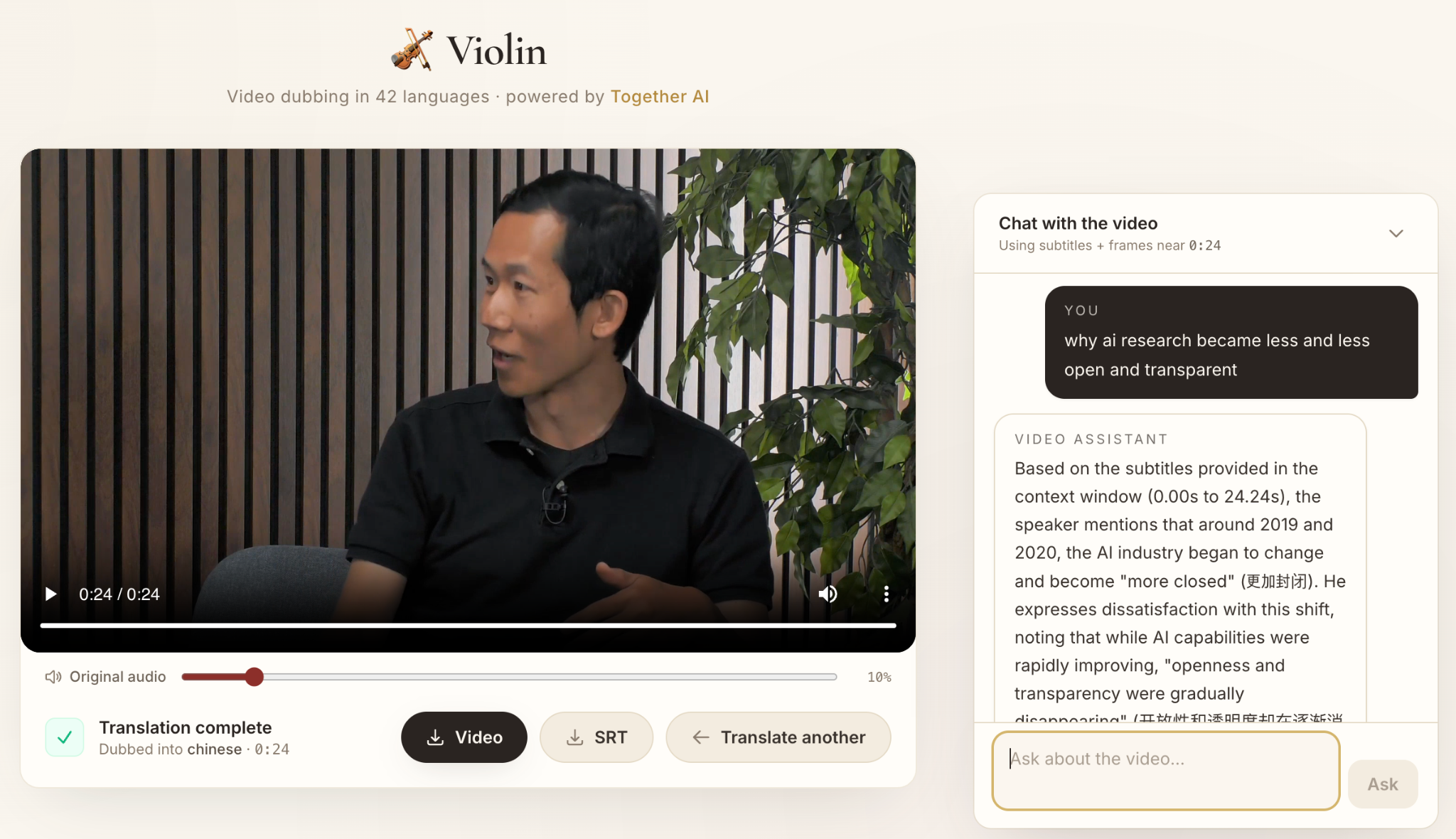

Chat with the video. Violin also includes a built-in multimodal chat assistant that answers questions based on the video’s content. Users can query details from the video, ask for summaries, or dive deeper into specific topics, all within the same interface.

The Violin Video Assistant: Ask any question about the video and get answers grounded in its audio and visual content.

How Violin works

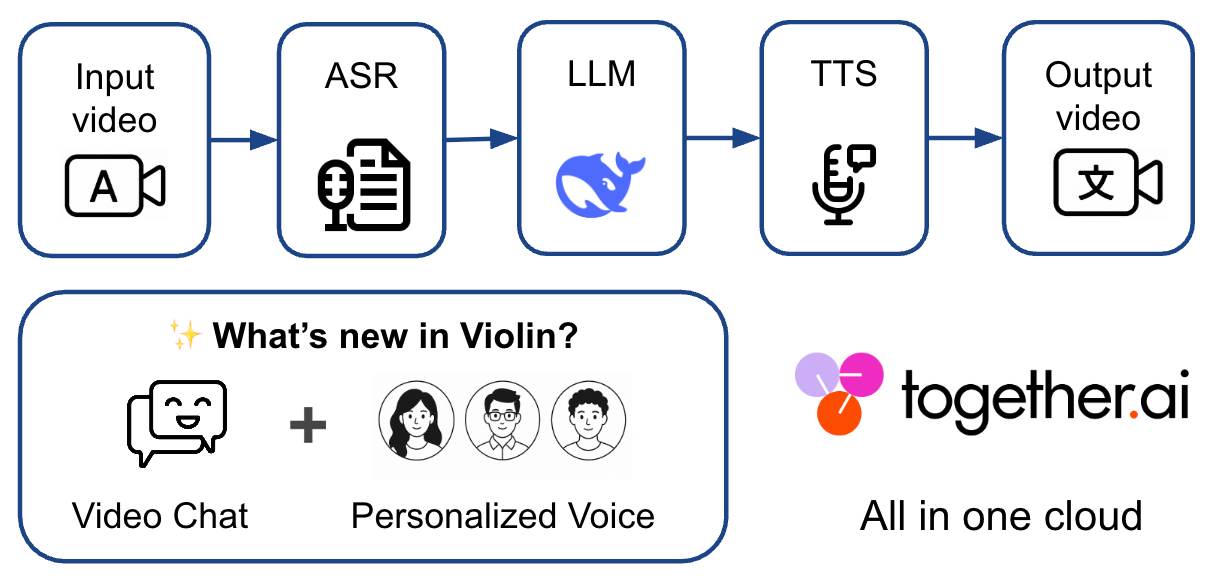

How Violin Works: From input video to fully translated output, Violin orchestrates three core stages: ASR (automatic speech recognition), LLM translation, and TTS (text-to-speech) voice synthesis. It also supports a video chat assistant and voice-style personalization, all running on Together AI’s cloud.
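
The orchestration in the figure can be pictured as three stages chained together, with timestamps carried through so subtitles and dubbed audio stay aligned. Below is a minimal sketch under that assumption; `Segment`, `run_pipeline`, and the stage signatures are hypothetical names for illustration, not Violin's actual code or the Together SDK.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Segment:
    """One timestamped span of speech; times are in seconds."""
    start: float
    end: float
    text: str


# Hypothetical stage signatures. Each stage is injected as a callable, so real
# ASR / LLM / TTS clients (or test stubs) can be swapped in freely.
Transcriber = Callable[[bytes], List[Segment]]
Translator = Callable[[List[Segment], str], List[Segment]]
Synthesizer = Callable[[List[Segment]], bytes]


def run_pipeline(audio: bytes, target_lang: str,
                 transcribe: Transcriber,
                 translate: Translator,
                 synthesize: Synthesizer) -> Tuple[List[Segment], bytes]:
    """Run ASR -> LLM translation -> TTS and return (translated segments, speech)."""
    source = transcribe(audio)
    translated = translate(source, target_lang)
    # Timing must survive translation so subtitles and dubbed speech stay
    # aligned with the original video.
    if [(s.start, s.end) for s in translated] != [(s.start, s.end) for s in source]:
        raise ValueError("translation must preserve segment timing")
    return translated, synthesize(translated)
```

Keeping the stages as plain callables makes the orchestration easy to test with stubs (for example, a fake translator that upper-cases each segment) before wiring in real API clients.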

Violin works in three straightforward stages:

First, Violin extracts the video's audio and transcribes it into timestamped text. We use Together’s Whisper large-v3 endpoint, which provides high-quality multilingual transcription at optimized speed.
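
Timestamped transcripts map directly onto a subtitle format. As a minimal sketch, assuming the ASR output has already been turned into a list of segments with start and end times in seconds (the `Segment` type and the `srt_timestamp` / `to_srt` helpers are hypothetical, not part of Violin or the Together SDK), the segments can be rendered as SubRip (SRT) cues:

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    start: float  # seconds
    end: float    # seconds
    text: str


def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = int(round(seconds * 1000))
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: List[Segment]) -> str:
    """Render timestamped segments as numbered SRT cues separated by blank lines."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        cues.append(
            f"{index}\n"
            f"{srt_timestamp(seg.start)} --> {srt_timestamp(seg.end)}\n"
            f"{seg.text.strip()}\n"
        )
    return "\n".join(cues)
```

For example, `srt_timestamp(3723.456)` returns `"01:02:03,456"`. The same cue list can be reused later for the translated subtitles, since translation keeps each segment's timing.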

Next, a large language model translates the transcript. By default, we use the recently released DeepSeek V4 Pro as the translator. Users can also supply a predefined list of translation rules to keep the translation faithful and accurate.
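
One way to apply user-supplied rules is to place them in the prompt and ask the model to return one numbered line per transcript segment, so each translation can be reattached to its original timestamps. The sketch below assumes this prompt layout and reply format; `build_translation_prompt` and `parse_numbered_lines` are hypothetical helpers, and the actual call to DeepSeek V4 Pro through the Together API is omitted.

```python
import re
from typing import Dict, List

# Matches reply lines such as "3: translated text" or "3. translated text".
_LINE = re.compile(r"^\s*(\d+)\s*[:.]\s*(.*\S)\s*$")


def build_translation_prompt(segments: List[str], target_lang: str,
                             rules: List[str]) -> str:
    """Assemble a prompt that lists the user's rules, then the numbered segments."""
    rule_block = "\n".join(f"- {rule}" for rule in rules) or "- (none)"
    numbered = "\n".join(f"{i}: {text}" for i, text in enumerate(segments, start=1))
    return (
        f"Translate each numbered line into {target_lang}.\n"
        f"Follow these rules strictly:\n{rule_block}\n"
        "Return exactly one line per input, keeping the same numbers.\n\n"
        f"{numbered}"
    )


def parse_numbered_lines(reply: str, expected: int) -> Dict[int, str]:
    """Map segment number -> translation; fail loudly if any segment is missing."""
    result: Dict[int, str] = {}
    for line in reply.splitlines():
        match = _LINE.match(line)
        if match:
            result[int(match.group(1))] = match.group(2)
    missing = [i for i in range(1, expected + 1) if i not in result]
    if missing:
        raise ValueError(f"model reply is missing segments {missing}")
    return result
```

Numbering the segments, rather than sending the transcript as free text, gives a cheap consistency check: a reply that drops or merges lines is rejected instead of silently shifting subtitles out of sync.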

Finally, a TTS model generates the translated speech, and users can describe the voice they want in plain text. Cartesia’s Sonic 3, hosted on Together, offers native-speaker voices in a wide range of languages, such as Korean, Dutch, Italian, and Chinese, which makes the translated video sound natural. Note that Violin does not support voice cloning: it uses a voice distinct from the original speaker's and, by default, overlays the new voice on the original audio, which is kept at a low volume.
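
The default overlay amounts to a simple mix: the original track is attenuated and the synthesized speech is added on top. The sketch below assumes both tracks are mono floating-point samples in [-1.0, 1.0] at the same sample rate; `mix_overlay` and its 0.2 default gain are illustrative choices, not Violin's actual settings.

```python
from itertools import zip_longest
from typing import List, Sequence


def mix_overlay(original: Sequence[float], dubbed: Sequence[float],
                original_gain: float = 0.2) -> List[float]:
    """Attenuate the original track by `original_gain` and add the dubbed speech.

    The shorter track is padded with silence (0.0), and each summed sample is
    hard-clipped to [-1.0, 1.0] so it stays in range for PCM output.
    """
    if not 0.0 <= original_gain <= 1.0:
        raise ValueError("original_gain must be between 0 and 1")
    mixed = []
    for orig_sample, dub_sample in zip_longest(original, dubbed, fillvalue=0.0):
        value = original_gain * orig_sample + dub_sample
        mixed.append(max(-1.0, min(1.0, value)))
    return mixed
```

On real audio files the same idea is usually applied with a dedicated tool such as ffmpeg rather than sample by sample in Python; the sketch only shows why the original speech remains faintly audible under the new voice.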

In addition, the video chat module lets you ask questions about the video. It is powered by a vision-language model that understands both what is said in the audio and what is shown on screen. For each question, Violin samples the most recent video frame along with the subtitle context and sends both to a vision-language model such as Qwen3.5-397B-A17B for free-form question answering, so the answer is grounded in what the viewer has just seen and heard.
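
A chat request like this can be assembled from two pieces: the subtitle lines near the current playback position and the sampled frame. Below is a minimal sketch, assuming the OpenAI-compatible message format with a base64 data-URL image that Together's chat API uses for vision models; the 30-second window, the helper names, and the prompt wording are hypothetical.

```python
import base64
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Segment:
    start: float  # seconds
    end: float    # seconds
    text: str


def subtitle_context(segments: List[Segment], now: float,
                     window: float = 30.0) -> str:
    """Join subtitle lines that overlap the interval [now - window, now]."""
    earliest = now - window
    lines = [s.text for s in segments if s.end >= earliest and s.start <= now]
    return "\n".join(lines)


def build_chat_messages(question: str, frame_jpeg: bytes,
                        context: str) -> List[Dict]:
    """Build one user message holding the subtitles, the question, and the frame."""
    encoded = base64.b64encode(frame_jpeg).decode("ascii")
    return [
        {
            "role": "user",
            "content": [
                {"type": "text",
                 "text": f"Recent subtitles:\n{context}\n\nQuestion: {question}"},
                {"type": "image_url",
                 "image_url": {"url": f"data:image/jpeg;base64,{encoded}"}},
            ],
        }
    ]
```

The returned list could then be passed as `messages` to `client.chat.completions.create(...)` in the Together Python SDK, together with the model ID for Qwen3.5-397B-A17B as listed by Together. Limiting the subtitles to a recent window keeps the prompt short and focused on what is currently on screen.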

Designed for everyone: Web app, CLI, and agent skills

We built Violin with usability at its core. Whether you’re a content creator who prefers a simple web interface, a developer who lives in the command line, or an AI practitioner integrating tools into autonomous agents, Violin has you covered:

- Web App – A clean, minimal frontend for uploading videos, selecting translation options, previewing results, and interacting with the video assistant. No code required.

- CLI Tool – A straightforward command-line interface for scripting, batch processing, and integration into existing pipelines.

- Agent Skills – We packaged Violin’s capabilities as a skill that can be dropped into common agent frameworks.

Everything — from the GUI to the backend models to the agent skills — is fully open source. We’re releasing the codebase under a permissive MIT license, inviting the community to adapt, extend, and improve. We believe open collaboration is the fastest path toward making video content truly language-agnostic.

Get involved

We’re just getting started, and we’d love your help. If you find Violin useful, or if you have ideas for how it could be better:

- Visit our GitHub repository: github.com/shang-zhu/violin

- Drop us a line at: heyviolinai@gmail.com

- Open a GitHub issue or start a discussion — we value every piece of feedback.

- Try our demo app at: https://violin-ai.com/ (the demo will be hosted for a limited time after release)

Acknowledgments

We are grateful to Martijn Bartelds, Yongchan Kwon, Federico Bianchi, and Kaitlyn Zhou for their thoughtful feedback. We thank the open-source model builders behind Whisper, DeepSeek, Qwen, and Cartesia, whose work forms the foundation of Violin. Special thanks to Hassan El Mghari and Percy Liang for providing videos and feedback during the development.

Disclaimer

Violin provides the translation tool; users are solely responsible for the content they translate, including compliance with copyright and other applicable laws. Uploaded videos are deleted after 24 hours in the demo app.

[1] Wikipedia, "Languages used on the Internet," accessed May 8, 2026. https://en.wikipedia.org/wiki/Languages_used_on_the_Internet

[2] Brian Yang, "6 Common Features Of Top 250 YouTube Channels," Twinword, accessed May 12, 2026. https://www.twinword.com/blog/features-of-top-250-youtube-channels/