Together Research

Foundational research for production AI

Our research areas

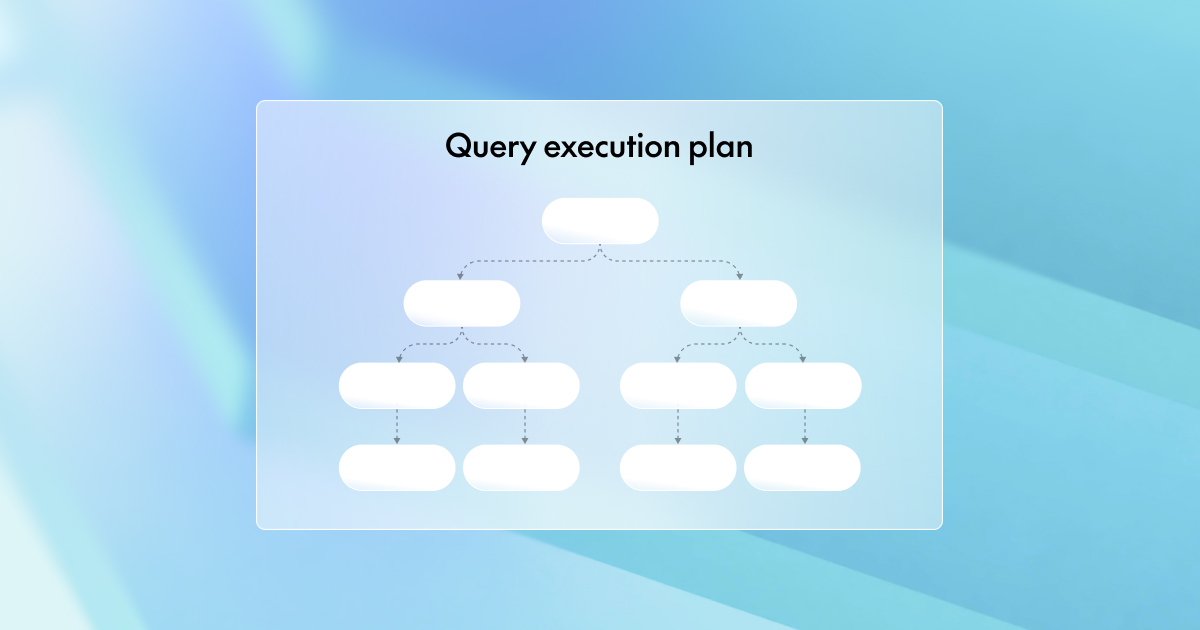

Inference

Design and optimization of production inference systems, spanning scheduling, batching, and hardware–software co-design for reliable high throughput.

Read papers

Kernels

Development of high-performance GPU kernels for training and inference, optimizing memory, attention, and custom operators at production scale.

Read papers

Model Shaping

Advancement of post-training methods like fine-tuning, distillation, and quantization to shape efficient, controllable model behavior.

Read papers

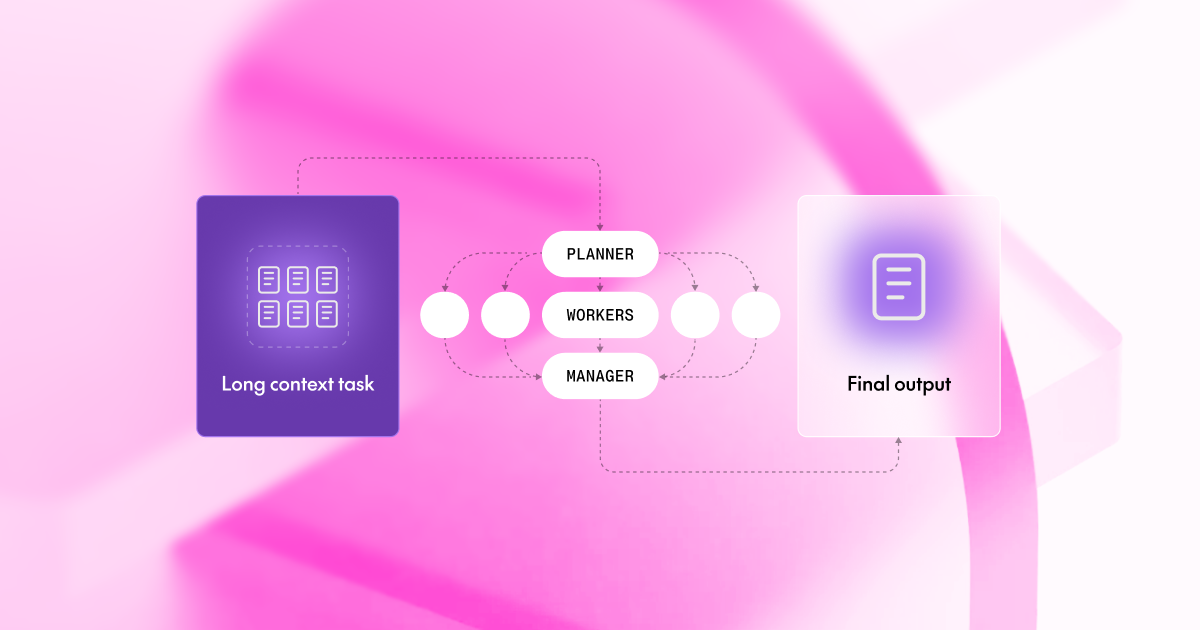

Agents

Studies of long-horizon reasoning and decision-making, focusing on tool use, multi-step planning, and reinforcement learning for reliable agentic systems.

Read papers

Recognized research

Papers accepted at top conferences

Key open-source projects

FlashAttention

IO-aware exact attention, universally adopted

Flash Decoding

8× faster long-context token generation

Mixture of Agents

Open models, working together, beat GPT-4o

Dragonfly

Tiny 8B model beats Med-Gemini on every benchmark

Red Pajama Datasets

100T+ tokens powering 500+ models

DeepCoder

First open model to match o3-mini on code

Open Deep Research

Open-source multi-model deep research agent

Open Data Scientist Agent

Autonomous agent tops Adyen's real-world benchmark

Research blogs

In the spotlight

At Slush 2025, Together AI VP of Kernels Dan Fu dives into building, using, and managing AI agents.

00:00

/

00:00

Research team

Researchers and engineers pushing the boundaries of AI

Ce Zhang

Founder & CTO

Chris Ré

Founder

Tri Dao

Founder & Chief Scientist

Percy Liang

Founder

Ben Athiwaratkun

Core ML

Dan Fu

Kernels

James Zou

Frontier Agents

Leon Song

Core ML

Max Ryabinin

Model Shaping

Simran Arora

Kernels

Yineng Zhang

Inference